X algorithm open source is the rare developer story that pulled in backend engineers, creators, crypto people, Rust fans, and every person who has ever yelled at a timeline.

The repo is real. The code is public. The README says the system combines in-network posts with out-of-network retrieval, then ranks candidates through a Grok-based transformer. GitHub shows a May 15 push, a fast-moving star count, and a small enough repository that you can actually read the shape of it over coffee.

And still, after reading it, my first reaction was not “wow, now we know how X works.” It was more like: okay, now we know which parts X is comfortable showing us.

That difference matters. Especially when half the internet wants to turn any public ranking code into a cheat sheet for reach.

X Algorithm Open Source Is A Real Drop

The repository is not empty theater

The official xai-org/x-algorithm repository describes itself as the core recommendation system behind the X For You feed. That alone makes it worth a look. Most social feed debates happen through rumor, screenshot archaeology, and creators comparing reach charts like they are reading weather patterns.

Here we at least get a map.

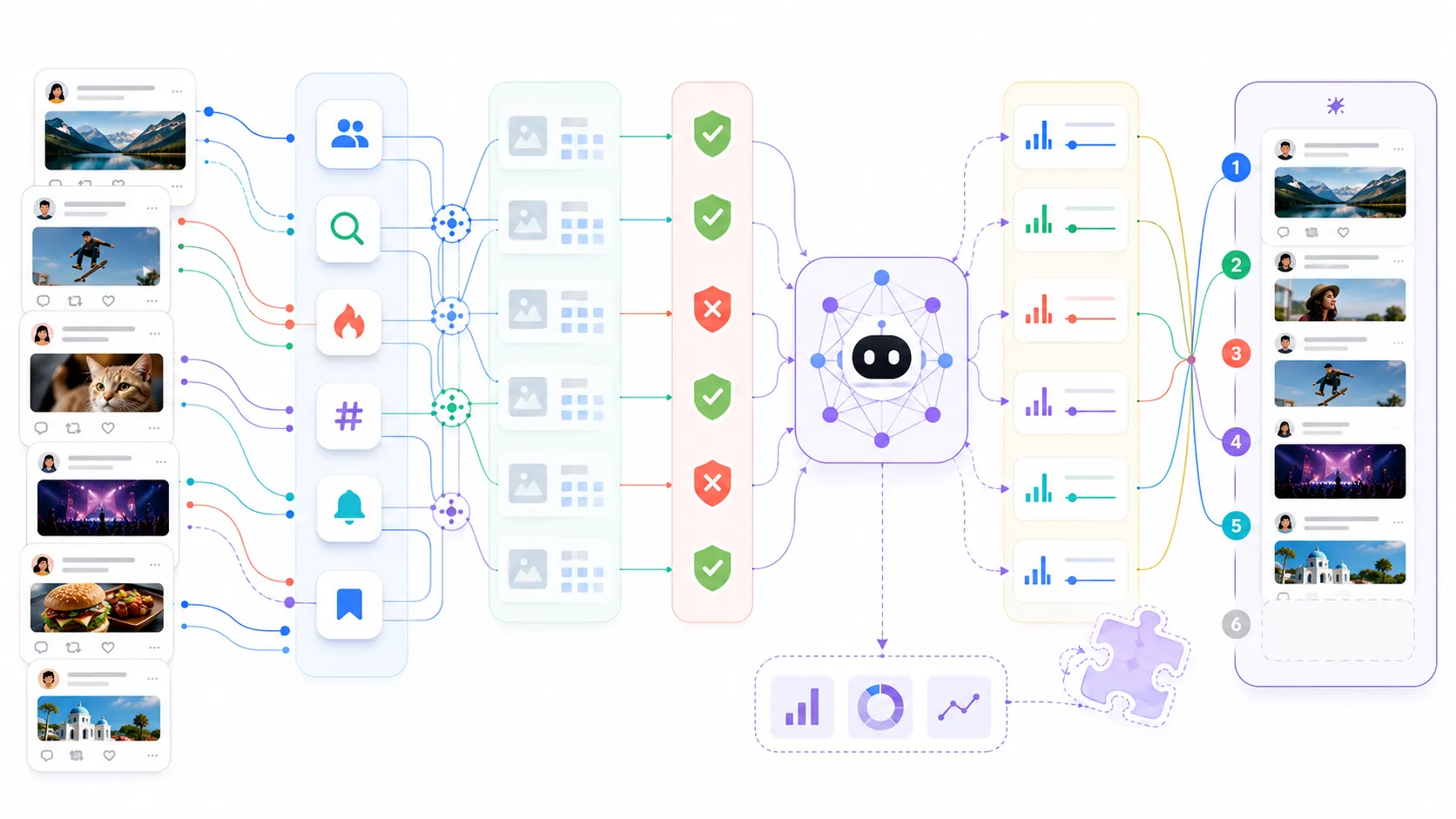

The current README lays out a pipeline with Home Mixer as the orchestration layer, Thunder for in-network candidates, Phoenix retrieval for out-of-network posts, hydration stages, filtering, scoring, and selection. It also says the May 15 update added a runnable end-to-end inference path, Grox content-understanding components, ads blending, query hydrators, candidate hydrators, and extra candidate sources.

That is not nothing. If you build recommender systems, feed ranking, search, moderation tooling, or even normal product discovery surfaces, there is useful architecture to read here. The interesting part is not just the transformer. It is the way the system decomposes the boring work around it: sources, hydrators, filters, scorers, side effects, and cacheable stages.

Honestly, that is the part I liked most.

Everyone talks about the model. The production system lives in the plumbing.

The shape is very 2026

The README says the system moved away from hand-engineered relevance features and relies on a Grok-based transformer to learn from engagement history. That is exactly the kind of claim that sounds inevitable in 2026. Less manual feature work. More representation learning. More model-mediated policy and ranking.

But the implementation shape is more grounded than the hype. There are Rust services. There are Python model pieces. There are candidate pipelines. There are filters that still sound painfully familiar: duplicates, old posts, self posts, blocked authors, muted keywords, previously seen posts, visibility filtering.

This is the funny thing about modern AI infrastructure. The pitch is “the model learns everything.” The system still has to remember that people mute keywords, block accounts, repost the same thing, and get bored when the same author fills the feed.

I do not mean that as criticism. I mean it as a useful reminder.

If your team is building an AI-ranking system, the model does not delete product rules. It moves the fight to a different layer.

The Most Interesting Part Is What You Still Cannot Audit

Public code is not the same as production transparency

The repo gives developers enough to understand the rough path from candidate retrieval to ranking. It does not automatically prove that the public code matches production behavior in every important way.

That is the gap people keep arguing about.

BeInCrypto framed the criticism from crypto users pretty sharply: the visible code is useful, but the exact production weights, deployed model details, and monthly update discipline are still the trust boundary. Some of that criticism may be self-interested. A lot of reach complaints are. But the engineering question is fair.

A ranking pipeline without production weights is like a CI system without the failing test logs. You know where the decision happens. You do not necessarily know why this specific decision happened.

The README shows a weighted scorer where predicted actions become a final score. That is the right abstraction. But the weights are the argument. A like, a reply, a dwell event, a profile click, a report, and a block are not morally or product-wise equivalent. The product decides what to reward, what to punish, and how hard.

The public code can show the socket. It may not show the voltage.

The model artifact question is bigger than it looks

The May 15 README notes mention a pre-trained mini Phoenix model, packaged through Git LFS, so people can run out-of-the-box inference without training a model first. That is genuinely helpful for developers who want to inspect the flow instead of spending a weekend fabricating fake checkpoints.

But a runnable small model is not the same as the live model.

That distinction is not some pedantic open-source purity test. It is the whole question. If the production feed depends on a larger model, different training data, private policy classifiers, private safety thresholds, or fast-changing operational rules, then the public repo is a reference architecture. Useful, but not a full accountability surface.

I am fine with reference architecture. I am less fine when people pretend reference architecture means total transparency.

The practical read is simple: treat the repo as a high-signal technical artifact, not as a magic decoder ring for your personal timeline.

Developers Should Read The Pipeline Before The Hot Takes

Home Mixer is the useful mental model

If I were walking a teammate through this repo, I would start with Home Mixer. Not Phoenix.

Phoenix is the shiny part because it has the model. Home Mixer is where the product becomes a system. It has to hydrate context, pull candidates from multiple sources, enrich posts, drop bad candidates, score the rest, diversify the selection, and return something fast enough to feel alive.

That is the piece most teams under-build.

I keep seeing products add “AI ranking” as if the model call is the feature. Then the candidate set is weak, metadata is stale, filters are bolted on late, and nobody can explain why a result appeared. The X repo is a useful corrective because it makes the surrounding machinery visible.

This is close to the same lesson I wrote about in Claude Code large repos. The model matters, sure. The harness decides whether the model can do useful work in a live system.

Same pattern, different surface.

Try reading it like a production checklist

If you want a useful way to poke the repo, skip the viral thread summaries for a few minutes and ask boring questions:

git clone https://github.com/xai-org/x-algorithm.git

cd x-algorithm

find . -maxdepth 2 -type d | sort

rg "Scorer|Hydrator|Filter|Source" .

rg "favorite|reply|repost|click|report|block" .

That gives you a better feel for the architecture than any “I found the secret to going viral” post.

The abstractions tell you what the system authors think changes often. Sources change. Hydrators change. Filters change. Scorers change. Side effects change. That is where feed teams need extension points because product pressure never stops. Ads need placement. Safety needs policy. New formats need metadata. Bad actors adapt. Creators optimize around yesterday’s signal.

A feed is not one algorithm. It is a moving negotiation between user value, business goals, safety, latency, and adversarial behavior.

That is why I do not buy the easy version of the story where open-sourcing the repo either solves everything or proves nothing. Both takes are lazy.

The Creator Cheat Sheet Angle Is Mostly Noise

Optimization advice gets weird fast

The funniest reaction to this drop is watching people immediately turn the repo into growth advice.

Some of that is harmless. If the system predicts engagement probabilities, then yes, posts that create actual replies, clicks, profile interest, and sustained attention probably matter more than low-effort volume. Shocking. Good posts beat spam. Who knew.

But this gets weird fast because creators want deterministic recipes and ranking systems are built to resist deterministic recipes. The moment a public signal becomes a public hack, it becomes a spam vector. Then the product has to discount it, contextualize it, or punish the abuse pattern around it.

So when someone says “the code proves replies are the only thing that matters,” I mostly hear “this person has not operated an adversarial product surface.”

The real takeaway is more boring and more durable: make content the system can classify clearly, avoid behavior that looks like repetitive engagement bait, and understand that negative feedback can matter as much as positive feedback.

The open source win is for system literacy

For developers, the win is not that we can now reverse-engineer the perfect post.

The win is that a major social product exposed enough of its feed architecture to make the conversation less mystical. You can point to candidate sourcing. You can point to hydration. You can point to multi-action prediction. You can point to post-selection filters. You can ask where the weights live, where the deployed models differ, and where policy enters the path.

That is healthier than arguing from vibes.

It also gives smaller teams a decent pattern library. If you are building a marketplace feed, a developer-tool recommendation surface, a document ranking system, or an internal knowledge feed, there are useful design ideas here. Separate retrieval from ranking. Keep filters explicit. Make hydrators composable. Treat negative signals carefully. Do not let the model be the only place product logic exists.

I would not copy the architecture blindly. X has X-shaped problems. Most products do not need this much machinery.

But I would absolutely steal the habit of making the pipeline legible.

What I Would Watch Next

The next update matters more than the first one

The first drop gets attention. The second and third drops establish whether this is a maintained transparency project or a one-time artifact.

GitHub currently shows May 15 activity, and the public README talks about that update directly. Good. Now the question is whether future production changes keep showing up with enough detail to matter. If X keeps refreshing code and notes, developers will have something real to follow. If the repo goes quiet while the feed keeps changing, the trust value decays quickly.

That is not unique to X. Any company can open-source a snapshot. Maintaining an accountability surface is the harder commitment.

I am also watching the model boundary. If the public artifact stays small and representative while production models evolve privately, then developers should keep calling it what it is: a reference implementation with some production-shaped bones. Still useful. Not complete.

The best result would be boring

The best outcome is not a viral cheat sheet.

The best outcome is that more developers get comfortable reading ranking systems as systems. Not just “the algorithm.” Not just “the model.” The whole path: candidates, metadata, filters, prediction, weighting, business rules, safety, caching, and observability.

That is where the real lessons are.

My take after reading the repo is pretty simple. X algorithm open source is a meaningful technical artifact. It is also not enough to settle transparency debates. Both things can be true.

And honestly, that tension is why the story is worth writing about.