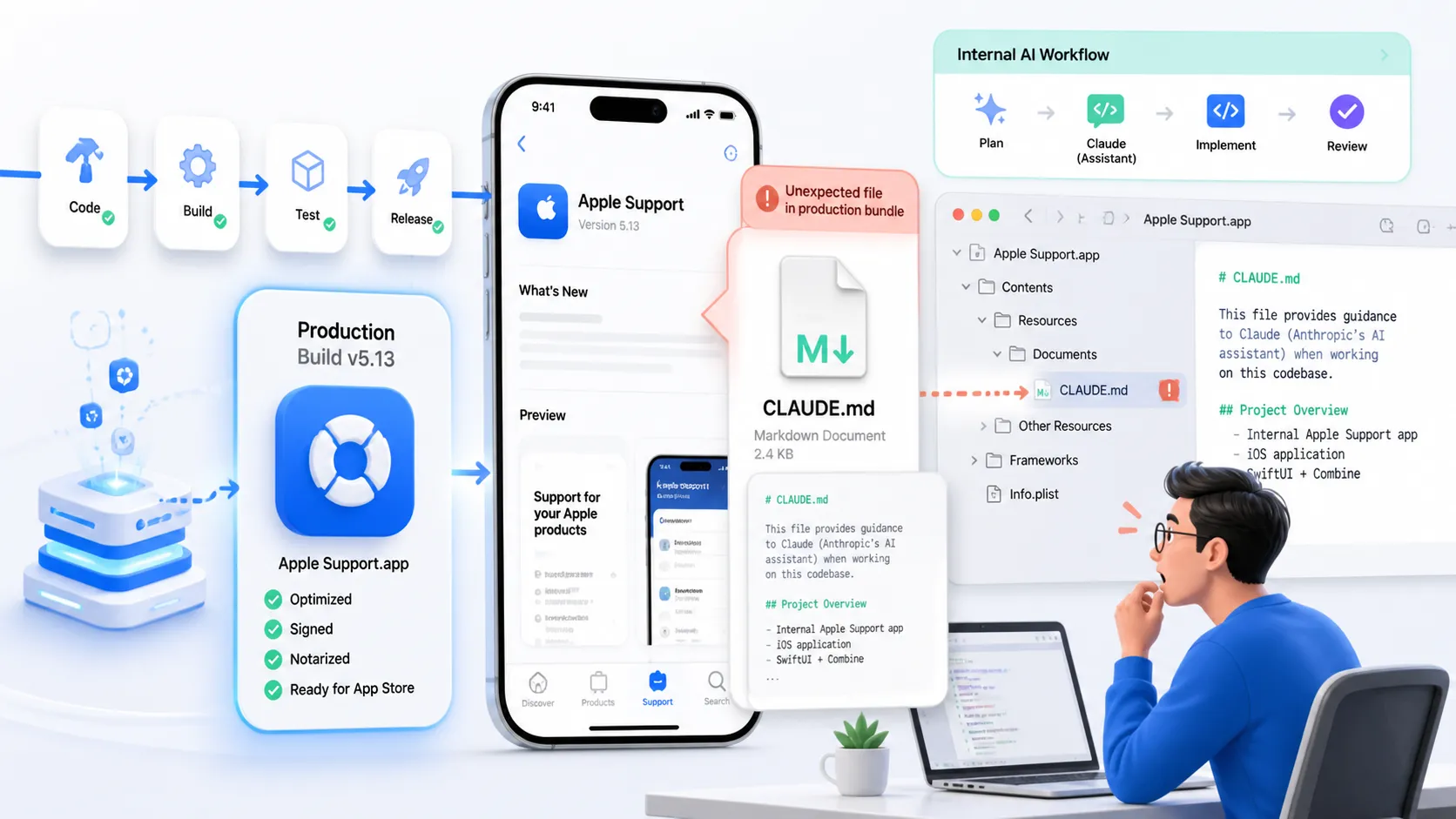

Apple shipped Claude.md files inside Apple Support v5.13, and that one packaging mistake told developers more about its internal AI workflow than a month of polished keynote language ever could. I saw the screenshots, rolled my eyes for about five seconds, and then had the more useful reaction: okay, what does this actually reveal?

The answer is not “Apple secretly let AI write the whole company.” That is the lazy version. The more interesting version is that a company famous for tight release control apparently let project-level Claude context files slip into a production app bundle, right after months of increasingly public signs that Anthropic sits much closer to Apple’s developer workflow than people used to assume.

That combination is why this story moved fast. It was fresh, it was funny, and it landed right in the middle of the 2026 developer anxiety stack: agentic coding, release hygiene, prompt context leaking into artifacts, and the uncomfortable realization that even the most locked-down shops are now normalizing AI instructions as part of daily engineering work.

Apple Shipped Claude MD Files Into Production

The leak itself is tiny but the signal is not

The core incident is simple. Posts on GeekNews and Reddit circulated screenshots showing that Apple Support v5.13 shipped with Claude.md files bundled in the app. One follow-up post pointed to an emergency v5.13.1 update that removed them. If that detail holds up, this was not a funny artifact left sitting around indefinitely. It was noticed and cleaned up quickly.

What made developers latch onto it is the specific kind of file involved. Anthropic’s own docs describe project CLAUDE.md files as shared instructions in version control for build commands, standards, architecture decisions, naming conventions, and common workflows. In other words, these are not random cache files. They are workflow scaffolding for how Claude should understand and operate on a codebase.

That matters because once a file like that ships, it stops being an abstract “AI is helping somewhere” story. It becomes direct evidence that AI context management has entered the release surface.

The funniest part is also the most normal part

Honestly, none of this shocked me. The shocking part would have been Apple not using tools like this by now. We already knew the company had moved in this direction. MacRumors covered Xcode 26.3 when Apple enabled Anthropic and OpenAI agents directly inside Xcode, and 9to5Mac later amplified Mark Gurman’s claim that Apple “runs on Anthropic” for a lot of internal product development. Once you accept that baseline, seeing Claude.md in a shipped artifact stops looking impossible and starts looking like a very modern release-process mistake.

The leak is embarrassing, sure. But it is embarrassing in the same way shipping debug config, stale source maps, or internal feature flags is embarrassing. It points less to science-fiction takeover and more to build hygiene that did not fully keep up with the new toolchain.

What The Claude MD Files Actually Tell Us

They tell us Apple is using AI with project structure, not just ad hoc prompts

This is the part I think a lot of people miss. A company-wide or team-wide habit of maintaining CLAUDE.md means AI usage is not just some engineer pasting code into a side chat at midnight. It suggests a more repeatable system. Anthropic’s docs say a project CLAUDE.md is where teams put workflow instructions and architectural context so the assistant starts each session with the right operating model.

That is a much bigger deal than “Apple uses Claude.” Lots of companies use Claude. The interesting part is whether they use it in a way that becomes operationally legible. A context file means someone cared enough to formalize expectations. That is process. That is standards. That is an engineering organization trying to make agent behavior more consistent across sessions and contributors.

And honestly, that sounds exactly like what a company at Apple’s scale would need. A loosely prompted AI assistant is cute for demos. It is not how you support large teams shipping real software on a schedule.

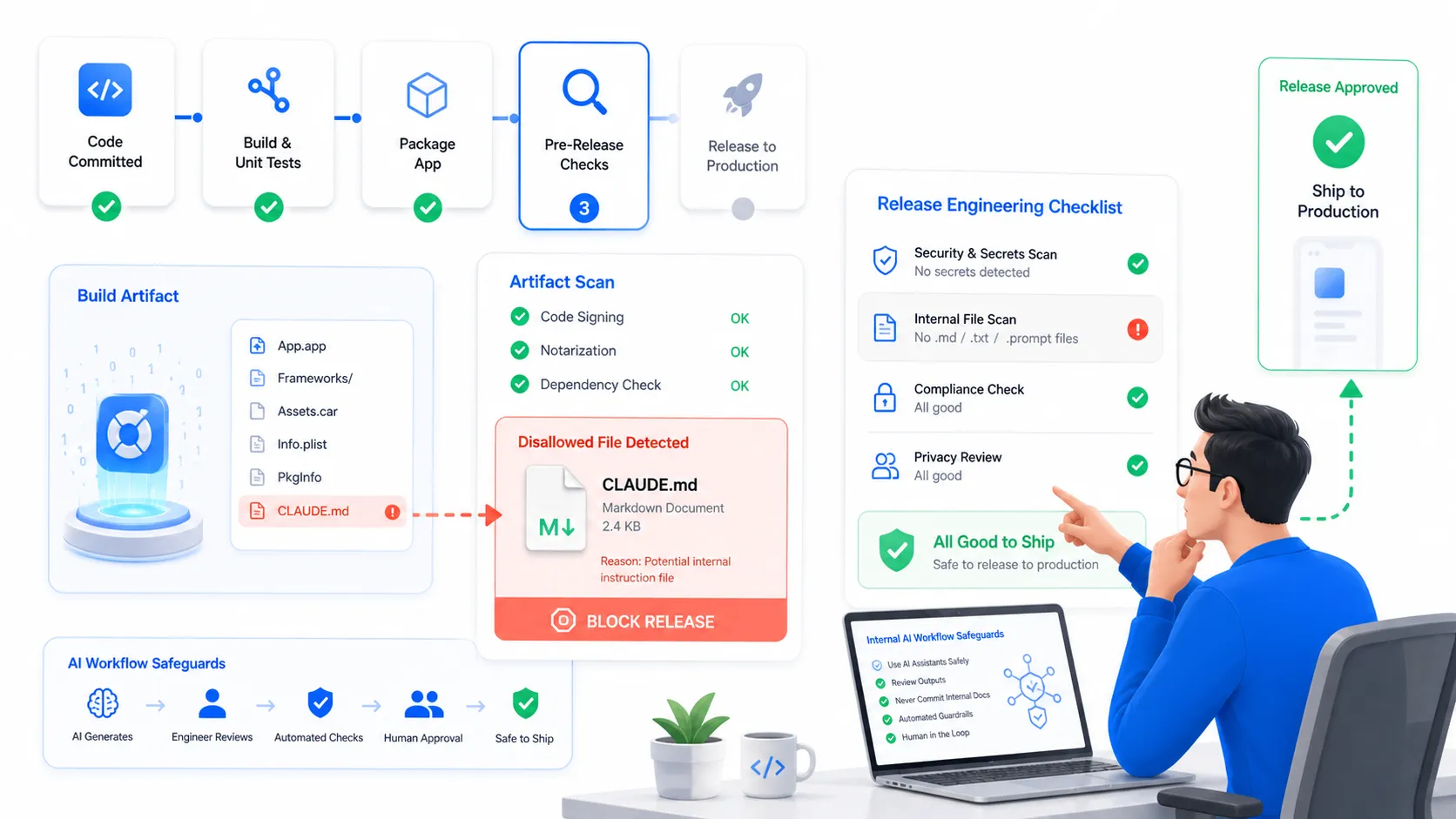

They also tell us the packaging boundary is now part of secure AI usage

The instant reaction online was basically “lol vibe coding at Apple.” I get why. But that framing is too shallow. The better lesson is that AI context files become another thing release systems need to classify correctly.

We already know how to think about this in other categories:

- Test fixtures should not ship.

- Secrets should not ship.

- Internal docs should not ship.

- Debug toggles should not ship.

Now add another line:

- Agent instruction files should not ship unless intentionally public.

That sounds obvious once stated, but obvious things still get missed whenever tooling evolves faster than release discipline.

Why This Matters More Than One Awkward App Update

Every dev team is drifting toward instruction-rich repos

This Apple leak is getting attention because the company is Apple, but the underlying pattern is everywhere now. Repos increasingly carry AI-specific context: CLAUDE.md, agent rules, model configs, test instructions, review hooks, MCP wiring notes, deployment caveats, and the odd human warnings that teammates used to keep in their heads and now write down as machine-readable project memory.

That is not a side effect anymore. That is the workflow.

If your team uses AI seriously, your repository stops being just source code plus docs. It becomes source code plus execution guidance for humans and agents at the same time. That is useful. It also expands what counts as a releasable artifact and what counts as internal operational knowledge.

The Apple story is really about release engineering catching up

The leak does not prove AI made Apple sloppy. It proves release engineering rules built for the pre-agent era are not enough by default.

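If I were reviewing this kind of setup on a real team, I would want three checks immediately: search the repo for references to agent files, find the files themselves on disk, and scan the file listing of the built artifact: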

rg -n "CLAUDE\\.md|AGENTS\\.md|rules|\\.claude" . -g '!node_modules' -g '!vendor'

find . -path '*/CLAUDE.md' -o -path '*/.claude/*'

zipinfo -1 build-artifact.zip | rg "CLAUDE\\.md|\\.claude|AGENTS\\.md"

That is not a fancy security program. That is the minimum viable embarrassment filter.

And yes, it should sit right next to checks for keys, tokens, debug assets, and internal-only metadata. If agents are part of production engineering, agent context deserves production-grade release controls.
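If I wanted to turn the one-liners above into something a CI job can actually fail on, a minimal sketch might look like this. The function names and the pattern list are my own assumptions, not anything Apple or Anthropic publishes, and it assumes the artifact is a zip-style archive (an .ipa is one) with `zipinfo` available on the build machine.

```shell
#!/bin/sh
# Hypothetical release gate: the pattern and function names are illustrative.

# Matches CLAUDE.md / AGENTS.md at any depth, plus anything under a .claude/ directory.
AGENT_FILE_PATTERN='(^|/)(CLAUDE\.md|AGENTS\.md)$|(^|/)\.claude/'

# Reads one path per line on stdin and prints the ones that look like agent context.
# -i matters: the files in the screenshots were reportedly named "Claude.md".
find_agent_files() {
  grep -Ei "$AGENT_FILE_PATTERN"
}

# Lists an archive; returns 1 if agent context files are inside,
# 2 if the archive cannot be listed, 0 otherwise.
check_artifact() {
  artifact="$1"
  listing="$(zipinfo -1 "$artifact")" || {
    echo "cannot list $artifact" >&2
    return 2
  }
  if hits="$(printf '%s\n' "$listing" | find_agent_files)"; then
    printf 'agent context files in %s:\n%s\n' "$artifact" "$hits" >&2
    return 1
  fi
  return 0
}
```

In a pipeline this would run right after packaging, something like `check_artifact build-artifact.zip || exit 1`. Keeping the path filter separate from the archive listing is deliberate: the same filter can be pointed at a tarball listing or a directory walk without touching the pattern.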

The Part I Keep Coming Back To

Apple is not unusual anymore and that is the real headline

The weirdest thing about this story is how un-weird it actually is. A year ago, “Apple accidentally shipped Claude context files” would have sounded like a parody of AI coding culture. In May 2026, it sounds like a plausible side effect of normal enterprise development.

That shift matters more than the leak itself.

MacRumors reported that Xcode 26.3 gives Anthropic and OpenAI agents direct access to project structure, builds, tests, snapshots, and Apple documentation. 9to5Mac then echoed Gurman’s claim that Anthropic powers a lot of Apple’s internal product work. Against that backdrop, the Claude.md slip does not feel like an isolated stunt. It feels like a glimpse of the hidden plumbing that now sits behind modern software teams.

If anything, the leak confirms that “AI-assisted coding” has already matured into “AI-structured development workflow.” People keep arguing about whether engineers are still writing code. Meanwhile, the more practical shift is that teams are spending more time designing the context around code: instructions, conventions, boundaries, and automated checks so agents do useful work without breaking the release lane.

The dev takeaway is boring and therefore worth doing

So no, I do not think the important conclusion is “Apple got caught vibe coding.” I think the useful conclusion is nastier and more practical: your build system now needs an opinion about AI context artifacts, because your engineers are going to create them whether management has a grand policy memo or not.

That means:

- classify agent instruction files as internal-by-default

- lint for them in release bundles

- decide which ones belong in version control and which do not

- treat prompt context like operational documentation, because that is what it is
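One way to make "internal-by-default" concrete, assuming your pipeline assembles the bundle in a staging directory before archiving it, is to strip agent context from that directory as a packaging step. This is a sketch under that assumption: `strip_agent_context` is a name I made up, and the file names it looks for are only the obvious ones.

```shell
#!/bin/sh
# Hypothetical packaging step: remove agent context from a staging directory
# before it is archived. The function name and file list are illustrative.

strip_agent_context() {
  staging="$1"
  [ -d "$staging" ] || { echo "not a directory: $staging" >&2; return 2; }

  # Remove whole .claude directories first. -prune stops find from descending
  # into a directory that is about to be deleted.
  find "$staging" -type d -name '.claude' -prune -exec rm -rf {} +

  # Then remove instruction files by name, case-insensitively, printing each
  # path so the build log records what was stripped.
  find "$staging" -type f \( -iname 'CLAUDE.md' -o -iname 'AGENTS.md' \) \
    -print -exec rm -f {} +
}
```

Stripping is a backstop, not a replacement for linting the finished bundle. A quiet strip step keeps the files out, but a check that fails loudly is what tells you a new kind of context file has started appearing in the repo.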

This leak will probably fade in a week. The workflow behind it will not. That is why I keep staring at it. Apple did not accidentally reveal some magical secret sauce. It revealed that the biggest companies are now doing the same thing smaller teams already are: teaching AI how their codebase works, then trying to keep that context out of what they ship.