Coverage of the Ubuntu DDoS attack reads like a boring availability story until you notice which services were having a bad day.

The headline version is simple: Canonical said its web infrastructure was under a sustained cross-border attack, and a bunch of Ubuntu-facing services got slow or unreachable around May 1. TechCrunch reported that Ubuntu developers were discussing failures around the security API and related Canonical sites. Ars Technica wrote that the outage limited Canonical’s ability to communicate security guidance to users.

That is the part that matters.

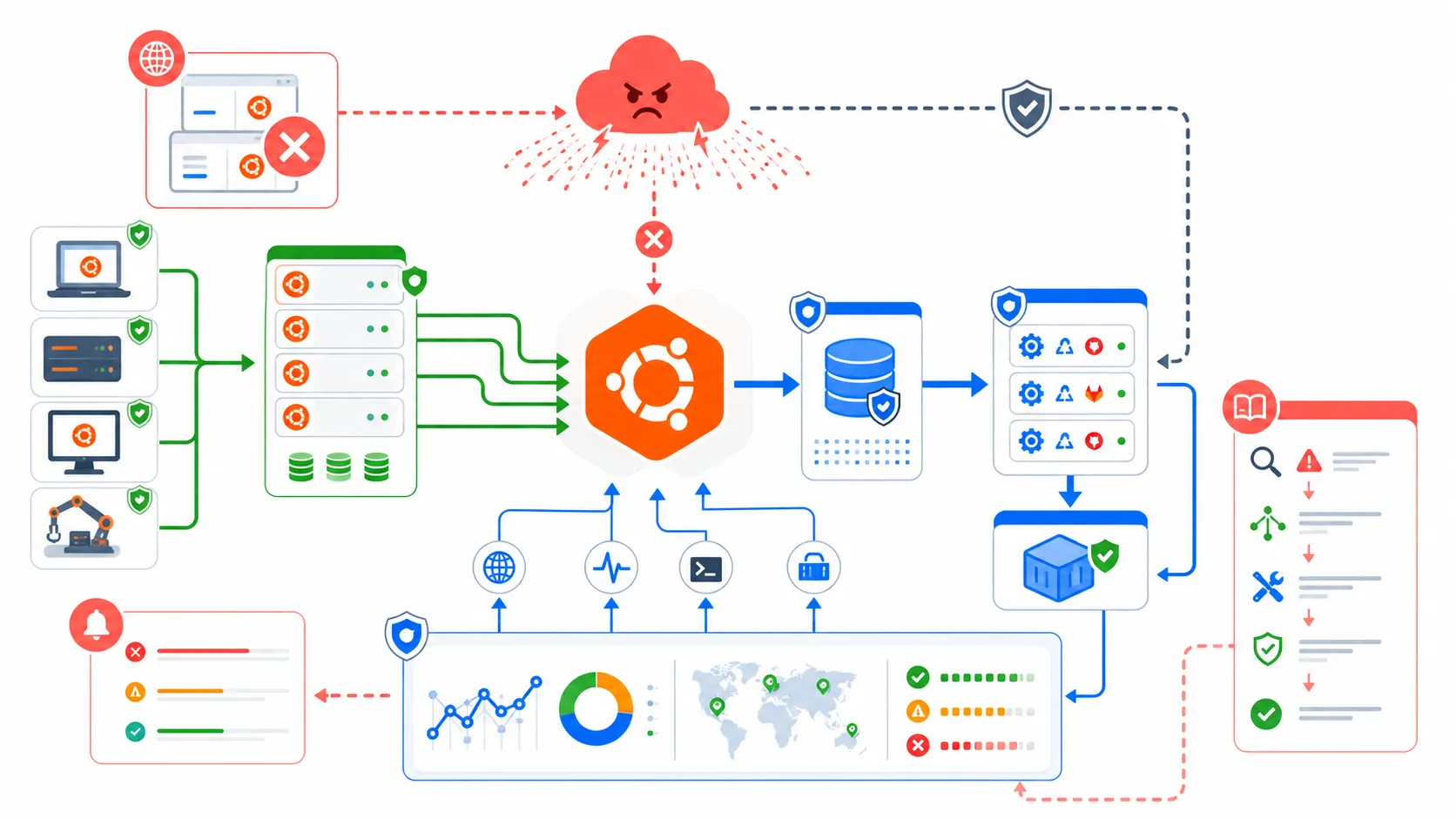

Not because this looks like a package compromise. It does not. The useful read is more annoying: when a distribution’s advisory pages, package infrastructure, status page, SSO, Launchpad, Snap Store, mirrors, or CVE APIs wobble at the same time, normal Linux maintenance starts feeling much less automatic than the dashboards make it look.

And yeah, that is uncomfortable if you run Ubuntu anywhere important.

Why This Hit Harder Than A Normal Outage

Security updates are not just another web property

A blog being down is annoying. A docs page being down is annoying. A package mirror or security notice pipeline being flaky during a fresh kernel vulnerability window is different.

The timing is what made this story sticky. On the same day people were watching the Canonical outage, Ubuntu had a public security thread for CVE-2026-31431 (Copy Fail), a high-severity Linux kernel local privilege escalation affecting releases before 26.04. The Ubuntu Security Team said mitigations were available through kmod, with kernel packages to follow.

That does not mean the DDoS caused a patch failure for every host. It means the operational shape was ugly. People wanted to know whether they were exposed. People wanted advisories. Automation wanted metadata. Mirrors and security endpoints were part of the conversation. That is exactly when reliability becomes security.

This is the difference between “the website is down” and “my patch workflow depends on a chain of services that are currently getting punched.”

The status page problem is its own lesson

The darkly funny thing about outages is that the status page becomes the one page everybody needs, and sometimes it is also affected or hard to trust from the outside.

IsDown’s Canonical tracker showed repeated incidents across Canonical components over May 2 and May 3, including security.ubuntu.com, archive.ubuntu.com, Livepatch API, Launchpad-related services, and assets. It also listed components back as OK later. That kind of third-party view is useful, but it also proves the point: teams were checking aggregators, Reddit threads, news reports, forums, and official fragments just to understand the state of a core dependency.

I do not want my incident room asking, “Which unofficial page is the least stale right now?”

That is not a dunk on Canonical. Running public Linux infrastructure at this scale is hard. But it is a reminder that status communication has to be treated as separate infrastructure, not a decorative page hanging off the same blast radius.

The Dependency Nobody Wants To Admit

Ubuntu is part of the production control plane

Most teams do not talk about Ubuntu like they talk about AWS, Cloudflare, GitHub, or Stripe. They should.

If your fleet uses Ubuntu images, your CI runners use Ubuntu, your Docker base images start from Ubuntu, your build agents pull from Ubuntu mirrors, and your security scanner consumes Ubuntu CVE feeds, then Canonical infrastructure is not background scenery. It is in your production control plane.

I wrote about a similar feeling in the Docker Spain Cloudflare block post. The lesson was not “Cloudflare bad” or “Docker bad.” The lesson was that infrastructure dependencies become visible only when they stop being boring. This Ubuntu incident has the same flavor. Everyone loves invisible plumbing until the plumbing is the incident.

Here is the small table I would put in front of a platform team:

| Dependency | Normal Day | Bad Day |
|---|---|---|
| security.ubuntu.com | Patch metadata and packages just work | Security maintenance waits or retries |
| Launchpad and PPAs | Builds and packages flow quietly | Custom package pipelines stall |
| Ubuntu Security API | Scanners enrich CVEs automatically | Risk dashboards lose freshness |
| Status and advisories | Humans get guidance fast | Incident teams hunt across channels |

None of this is exotic. That is why it matters.

A DDoS is not a breach, but it can still create risk

I saw a few reactions treating this like it either was a breach or did not matter. Both are lazy.

A DDoS is not the same as someone modifying packages. UbuntuPIT’s recap made that point clearly: there were no public reports of user data, package integrity, or internal Canonical systems being compromised. Tom’s Hardware also noted that there were no reports of compromised package repositories or ISO images.

Good. That distinction matters.

But availability failures still create security risk. If teams delay updates, skip checks, bypass mirrors, disable scanners, or trust random workarounds because official paths are down, attackers do not need to alter the upstream package. They can wait for humans to get impatient.

That is the real production lesson here. Availability is part of your security model whether your policy document admits it or not.

What I Would Change On Monday

Stop assuming upstream will always be online

The practical response is not panic. It is boring resilience work.

If you run real Ubuntu fleets, I would check whether your patching and build paths have a fallback that does not require people to invent one during an outage. Local mirrors. Cached package repositories. Tested mirror switching. Scanner behavior when CVE feeds are stale. Build isolation when a PPA is unreachable. Runbooks that say what is allowed and what is absolutely not allowed.
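One item on that list, scanner behavior when CVE feeds are stale, is easy to make concrete. Here is a minimal sketch, assuming your scanner reads from a locally cached feed file. The function name, the idea of a single cache file, and the use of file modification time as the freshness signal are all my assumptions for illustration, not something any particular scanner does. It relies on `find -mmin`, which GNU and BSD `find` both support.

```shell
#!/bin/sh
# Sketch: decide whether a locally cached CVE feed is too old to trust.
# The cache layout is hypothetical; point it at whatever your scanner stores.

# cache_is_stale FILE MAX_AGE_MINUTES
# Succeeds (returns 0) when FILE is missing or was last modified more than
# MAX_AGE_MINUTES ago. Returns non-zero when the cache is still fresh.
cache_is_stale() {
    # A missing cache counts as stale, so callers never trust "no data".
    [ -f "$1" ] || return 0
    # find prints the path only if its mtime is older than the window.
    [ -n "$(find "$1" -mmin "+$2" -print)" ]
}
```

A pipeline step could call something like `cache_is_stale /var/cache/cve/feed.json 360` (path and window are made up) and then mark results as degraded instead of silently reporting a clean scan.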

Try running something like this from a controlled maintenance host and see what breaks when endpoints are unavailable:

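    #!/usr/bin/env bash
    # Probe each endpoint once and report it as ok or failed.
    set -euo pipefail

    for url in \
      https://security.ubuntu.com/ubuntu/ \
      https://archive.ubuntu.com/ubuntu/ \
      https://status.canonical.com/ \
      https://ubuntu.com/security/notices
    do
      printf '%s ' "$url"
      # The || branch keeps set -e from aborting the loop on the first failure.
      curl -fsS --connect-timeout 5 --max-time 15 -o /dev/null "$url" && echo ok || echo failed
    done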

That script is not magic. It is just enough to start the conversation. The better version is wired into your monitoring, knows which endpoints your org actually depends on, and pages the right team before a release train blocks.
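To show what "knows which endpoints your org actually depends on" might look like, here is a rough sketch that reads the endpoint list from input and only fails when something tagged as critical is down. The `tier url` line format and the tier names are my own invention for this example. The curl flags are the same ones used in the loop above.

```shell
#!/bin/sh
# Sketch: probe a list of endpoints and fail only for critical ones.
# Input format (an assumption for this example): one "tier url" pair per line.

# probe URL: succeed if the URL answers within the timeouts.
probe() {
    curl -fsS --connect-timeout 5 --max-time 15 -o /dev/null "$1" 2>/dev/null
}

# check_endpoints: read "tier url" lines on stdin, print one result per line,
# and return 1 if any endpoint tagged "critical" failed its probe.
check_endpoints() {
    failed=0
    while read -r tier url; do
        case "$tier" in
            ''|'#'*) continue ;;   # skip blank lines and comments
        esac
        if probe "$url"; then
            printf 'ok %s %s\n' "$tier" "$url"
        else
            printf 'FAILED %s %s\n' "$tier" "$url"
            if [ "$tier" = "critical" ]; then failed=1; fi
        fi
    done
    return "$failed"
}
```

Called as `check_endpoints < endpoints.txt`, the non-zero exit status can drive whatever alerting you already have, and the tier column is where the runtime, build, and patch distinction would live.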

Treat Linux distro dependencies like vendor dependencies

I know this sounds obvious, but most companies do vendor risk management for SaaS apps and then hand-wave the OS supply chain because it feels like public infrastructure.

That gap is getting harder to defend.

Canonical is a vendor in your stack if your systems depend on Canonical’s infrastructure. So ask the same boring questions you would ask of any vendor path:

- Which services do we depend on directly?

- Which ones are runtime critical, build critical, or patch critical?

- What stale window is acceptable for CVE metadata?

- Can we prove package integrity when switching mirrors?

- Who can approve an emergency mirror or cache workaround?

- What do we do if official advisories are unavailable but social channels are noisy?
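The package integrity question is the one I would want a runbook answer for. As a partial sketch: an apt repository's `Release` file lists SHA256 hashes for its index files, so a manual spot check can compare an index fetched from an alternate mirror against a `Release` file you already trust. The big assumption is that the `Release` file's signature was verified separately (for example with `gpgv` against the Ubuntu archive keyring), which this snippet does not do. apt performs its own verification; this is only for out-of-band checks, and the function names are mine.

```shell
#!/bin/sh
# Sketch: compare a downloaded index file against the hash in a Release file.
# Assumes the Release file itself has already been signature-checked elsewhere.

# release_sha256 RELEASE_FILE INDEX_PATH
# Print the SHA256 that the Release file lists for INDEX_PATH
# (for example main/binary-amd64/Packages.gz).
release_sha256() {
    awk -v want="$2" '
        /^SHA256:/ { in_section = 1; next }   # start of the SHA256 block
        /^[^ ]/    { in_section = 0 }         # any other field ends it
        in_section && $3 == want { print $1; exit }
    ' "$1"
}

# index_matches_release RELEASE_FILE INDEX_PATH LOCAL_FILE
# Succeed only if LOCAL_FILE hashes to the value recorded for INDEX_PATH.
index_matches_release() {
    expected=$(release_sha256 "$1" "$2")
    [ -n "$expected" ] || return 1   # path not listed: treat as a failure
    actual=$(sha256sum "$3" | awk '{ print $1 }')
    [ "$expected" = "$actual" ]
}
```

The design choice worth copying is the empty-hash case: if the path is not in the `Release` file, the check fails instead of passing by default.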

This also connects to the PyTorch Lightning supply chain attack from last week. That incident was about poisoned packages. This one is about availability. Different failure mode, same uncomfortable direction: developer infrastructure is now security infrastructure.

The Part I Keep Thinking About

The fix is not glamorous

The best answer to this kind of event is almost aggressively unexciting.

Mirror critical packages. Cache what you can. Pin base images intentionally. Keep emergency apt configuration documented. Monitor the endpoints you depend on, not the generic homepage. Separate status communication from the affected surface. Teach build pipelines how to fail closed instead of quietly grabbing whatever mirror responds first.
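For the fail-closed part, here is the shape I have in mind, as a sketch and not a drop-in tool. The allowlist contents, the internal mirror hostname, and the function names are all hypothetical. The point is only that the pipeline picks from mirrors someone approved in advance and stops when none respond.

```shell
#!/bin/sh
# Sketch: choose a mirror from an approved list, or refuse to continue.
# APPROVED_MIRRORS is a hypothetical allowlist; replace it with your own.
APPROVED_MIRRORS="https://archive.ubuntu.com/ubuntu/
https://mirror.internal.example/ubuntu/"

# mirror_reachable URL: succeed if the mirror answers within the timeouts.
mirror_reachable() {
    curl -fsS --connect-timeout 5 --max-time 15 -o /dev/null "$1" 2>/dev/null
}

# pick_mirror: print the first approved mirror that responds, in list order.
# Return 1 and print nothing on stdout if none do, so the caller stops
# instead of improvising a replacement.
pick_mirror() {
    for m in $APPROVED_MIRRORS; do
        if mirror_reachable "$m"; then
            printf '%s\n' "$m"
            return 0
        fi
    done
    echo "no approved mirror reachable; refusing to continue" >&2
    return 1
}
```

A build step would run something like `mirror=$(pick_mirror) || exit 1` before touching apt configuration, which turns "the mirror is down" into a visible failed job instead of a quiet substitution.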

It is not the kind of work that gets a shiny launch post. It does not demo well. Nobody claps because your CVE cache degraded gracefully for six hours.

But this is the work that keeps a DDoS from becoming a patching incident, a patching incident from becoming a compliance incident, and a compliance incident from becoming a late-night archaeology project across CI logs.

Ubuntu will recover. Canonical will harden things. The news cycle will move on.

The useful question for the rest of us is simpler: if the Linux infrastructure you quietly depend on gets noisy for a day, does your production system keep behaving like an adult?