Context Mode is the first MCP server in a while that made me stop scrolling and actually open the repo instead of mentally filing it under “cool demo, probably annoying in practice.”

My usual dismissive reflex did not survive today. On May 3, 2026, GeekNews pushed Context Mode to the top of its front page, and the timing was not random. The repo is active right now, the official site is live, the maintainer already shipped a v1.0.0 milestone back on March 7, and the GitHub API on May 3 shows 12,071 stars with fresh updates still landing the same day. That is not “old repo discovered by one newsletter” energy. That is real momentum.

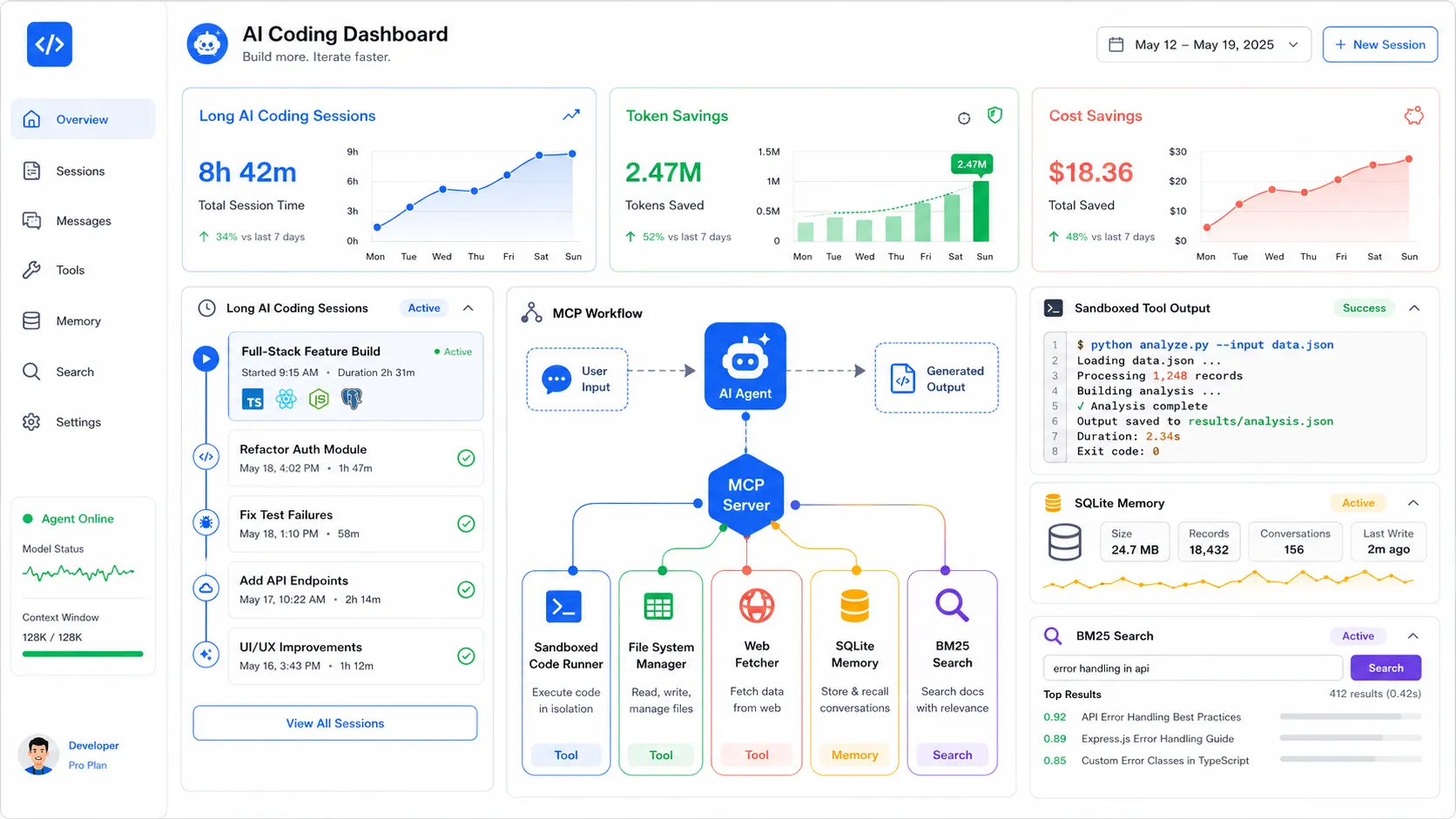

What makes the story more interesting is that the pitch is not some vague “better prompts” promise. Context Mode is selling a very concrete fix for a very real developer pain: raw tool output keeps flooding AI coding sessions until the agent gets slower, dumber, or both. The repo claims a Playwright snapshot can cost 56 KB, twenty GitHub issues can cost 59 KB, and a normal noisy session can chew through context so fast that 30 minutes feels like a soft time limit.

Honestly, that part tracks. If you use any serious agent workflow with shell commands, logs, test failures, browser snapshots, and web fetches, you already know the model is not really failing because it cannot code. A lot of the time it is failing because the working memory got polluted by garbage.

Why Context Mode Broke Out Today

GeekNews gave it a same-day spike, but the repo was already loaded

The May 3 spike matters because it is fresh. GeekNews published the topic at 2026-05-03 09:31:02 +0900, and the summary that spread there is extremely legible: protect the context window, extend session life from roughly 30 minutes to roughly 3 hours, keep event history in SQLite plus FTS5, and make the model “think in code” instead of shoveling raw files into chat.

That is the kind of tooling pitch developers instantly understand because the pain is already familiar. Nobody needs a long abstract lecture to understand why a bloated context window is bad. We have all watched an agent lose the thread halfway through a repo task and then pretend it is still doing fine.

This also does not look like a repo that only woke up because of one aggregator. The official v1.0.0 release post framed the project as a move from a single-client MCP server to a broader multi-platform context engine. The maintainer later wrote in issue 134 that there was no marketing budget, no DevRel team, and no growth machine behind it. That is exactly why today’s spike feels more like delayed demand than manufactured hype.

It landed right as harness engineering became the real conversation

The second reason the topic feels hot right now is timing. The market is clearly moving away from “which model is best” as the only question. The more useful question is what Addy Osmani called agent harness engineering: prompts, tools, hooks, context policies, sandboxes, feedback loops, and all the boring surrounding machinery that decides whether a model is usable for real work.

That framing matters a lot here. Context Mode is not trying to beat Claude, GPT, Gemini, or Copilot at model quality. It is trying to make whichever model you already use behave less like a goldfish during long, messy coding sessions.

That is a better story than yet another benchmark flex.

| Signal | What I see | Why it matters |
|---|---|---|
| Fresh front page spike | GeekNews put it at the top on May 3 | The topic is live, not stale |
| Official maturity signal | v1.0.0 shipped on March 7 | This is past toy stage |
| Current repo traction | 12,071 GitHub stars on May 3 | Developers are actively paying attention |
| Broader industry framing | Harness engineering is now mainstream discussion | The problem category got bigger than one tool |

What Context Mode Actually Does

It treats raw tool output like the real enemy

The core idea is simple and kind of brutal: stop feeding the model raw junk when raw junk is not the actual task.

The README says Context Mode sandboxes tool output, indexes it locally, and sends back only compact results instead of dumping the whole thing into the model context. It claims 315 KB of raw output can become 5.4 KB, roughly 98 percent savings. It also pushes a workflow philosophy I actually like: if the agent needs counts, summaries, or extracted structure, it should write code to compute them rather than reading 50 files like a sleepy intern.

That is one of those ideas that sounds obvious the second somebody says it clearly.
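To make "think in code" concrete, here is a minimal sketch of the idea. It is not Context Mode's implementation, and the function name `summarize_matches` and its parameters are mine, invented for illustration. The point is only that the agent gets back a few lines of counts instead of the contents of every file it scanned.

```python
from pathlib import Path


def summarize_matches(root: str, needle: str, suffix: str = ".py", top: int = 5) -> str:
    """Scan files under `root` and return a compact summary, not file contents.

    Hypothetical helper for illustration only.
    """
    counts: dict[str, int] = {}
    for path in Path(root).rglob(f"*{suffix}"):
        if not path.is_file():
            continue
        text = path.read_text(encoding="utf-8", errors="replace")
        hits = text.count(needle)
        if hits:
            counts[str(path.relative_to(root))] = hits

    # Rank by hit count (descending), then by name for stable output.
    ranked = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))[:top]

    lines = [f"{sum(counts.values())} matches for {needle!r} in {len(counts)} files"]
    lines += [f"{n:>4}  {name}" for name, n in ranked]
    return "\n".join(lines)
```

Calling something like `summarize_matches(".", "TODO")` returns a header line plus at most five ranked rows, no matter how many files were read to produce it.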

If you have read my earlier MCP guide, you already know the protocol itself is not the magic part. The hard part is what kind of data you let through, when you let it through, and how much of it the model really needs. Context Mode is basically a strong opinion about that boundary.

The session continuity piece is bigger than the token savings

The 98 percent number is what gets the clicks. The session continuity part is what feels like the actual product.

According to the repo, every file edit, git operation, task, error, and user decision gets tracked in SQLite and retrieved with FTS5 plus BM25 when the conversation compacts. That matters more than people think. Losing tokens is annoying. Losing working state is what actually wrecks long-running agent work.

This is why the repo pitch keeps talking about 30-minute sessions becoming 3-hour sessions. It is not only about compression. It is about surviving compaction without turning the next 20 minutes into a confused re-onboarding loop.

I think that is the piece many developers will care about once they move past the headline. Cheap summaries are nice. Durable task memory is where the workflow starts to feel different.
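Here is a rough sketch of what SQLite plus FTS5 plus BM25 can look like for session events, using Python's built-in `sqlite3`. The table name `events`, its two columns, and the helper functions are my assumptions for illustration, not Context Mode's actual schema or API. It also assumes your SQLite build ships with FTS5 compiled in, which is common but not guaranteed.

```python
import sqlite3


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Open a store with one FTS5 table for session events (hypothetical schema)."""
    conn = sqlite3.connect(path)
    conn.execute("CREATE VIRTUAL TABLE IF NOT EXISTS events USING fts5(kind, detail)")
    return conn


def record(conn: sqlite3.Connection, kind: str, detail: str) -> None:
    """Append one event, e.g. an edit, an error, or a user decision."""
    conn.execute("INSERT INTO events (kind, detail) VALUES (?, ?)", (kind, detail))


def recall(conn: sqlite3.Connection, query: str, limit: int = 3) -> list[tuple[str, str]]:
    """Return the best-matching events for a full-text query.

    FTS5's bm25() yields lower values for better matches,
    so ascending order puts the most relevant rows first.
    """
    rows = conn.execute(
        "SELECT kind, detail FROM events "
        "WHERE events MATCH ? ORDER BY bm25(events) LIMIT ?",
        (query, limit),
    )
    return rows.fetchall()
```

After a compaction, the harness would call something like `recall(conn, "login")` and inject only those few rows, rather than replaying the whole session history.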

| Claim from the repo | Why I care more than the marketing line |
|---|---|
| Sandboxed output instead of raw output | This reduces context pollution immediately |
| SQLite plus FTS5 session memory | This helps after compaction, not just before it |
| Think in code instead of read everything | This matches how good human engineers already work |
| Hook support across multiple platforms | This makes it portable instead of one-client only |

The 3-Hour Claim Needs A Boring Test

The numbers are strong enough to take seriously

I would not blindly trust any repo claiming 98 percent improvement at anything. That said, the specific examples here are concrete enough that I would absolutely test them instead of dismissing them.

A Playwright snapshot going from 56.2 KB to 299 B is believable if the system is returning intent-ranked snippets instead of the full accessibility dump. Twenty GitHub issues going from 58.9 KB to 1.1 KB is believable if the system is extracting only issue metadata and relevant text. A session stretching from 30 minutes to 3 hours is believable if you combine output sandboxing, stricter compression, and durable state recovery.

None of that proves it will work on your repo. It does prove the claim is specific enough to measure.
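As a quick sanity check, the quoted sizes are at least internally consistent with the "roughly 98 percent" headline. This is just arithmetic on the README figures cited above. The one assumption is that "KB" means 1024 bytes; using 1000 barely moves the result.

```python
def reduction_percent(before_bytes: float, after_bytes: float) -> float:
    """Percentage by which output shrank, from before to after."""
    if before_bytes <= 0:
        raise ValueError("before_bytes must be positive")
    return 100.0 * (1.0 - after_bytes / before_bytes)


KB = 1024  # assumption: the README's "KB" is 1024 bytes

# Sizes as quoted from the repo; nothing here is measured by me.
claims = {
    "aggregate (315 KB -> 5.4 KB)": (315 * KB, 5.4 * KB),
    "Playwright snapshot (56.2 KB -> 299 B)": (56.2 * KB, 299),
    "20 GitHub issues (58.9 KB -> 1.1 KB)": (58.9 * KB, 1.1 * KB),
}

for label, (before, after) in claims.items():
    print(f"{label}: {reduction_percent(before, after):.1f}% smaller")
# aggregate (315 KB -> 5.4 KB): 98.3% smaller
# Playwright snapshot (56.2 KB -> 299 B): 99.5% smaller
# 20 GitHub issues (58.9 KB -> 1.1 KB): 98.1% smaller
```

So the individual examples land between about 98 and 99.5 percent, which matches the headline number. Whether those sizes hold on your workload is a separate question.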

If I were evaluating this at work, I would keep it painfully boring:

```bash
# try this on one noisy repo before declaring victory
# 1. pick one bug hunt with logs and browser snapshots
# 2. pick one repo research task with lots of file reads
# 3. compare context use, latency, and reorientation after compaction
# 4. keep the same model, same repo, same task shape
```

That is the real test. Not whether the README sounds smart. Whether the agent stays sharp on your actual mess.
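If you want to keep that comparison honest, write the numbers down in a fixed shape. Below is a minimal sketch of how I would record a baseline run and a Context Mode run of the same task. The `Run` fields and the `compare` helper are hypothetical names, and how you collect each number depends on your client.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Run:
    """One recorded agent run (hypothetical structure for illustration)."""
    task: str
    context_bytes: int        # total bytes that entered the model context
    wall_seconds: float       # end-to-end time for the task
    reorientation_turns: int  # turns spent re-explaining state after compaction


def compare(baseline: Run, candidate: Run) -> dict[str, float]:
    """Compare two runs of the same task; ratios below 1.0 favor the candidate."""
    if baseline.task != candidate.task:
        raise ValueError("only compare runs of the same task")
    if baseline.context_bytes <= 0 or baseline.wall_seconds <= 0:
        raise ValueError("baseline measurements must be positive")
    return {
        "context_ratio": candidate.context_bytes / baseline.context_bytes,
        "latency_ratio": candidate.wall_seconds / baseline.wall_seconds,
        "reorientation_delta": float(
            candidate.reorientation_turns - baseline.reorientation_turns
        ),
    }
```

The task check is deliberate: comparing a bug hunt against a research task tells you nothing, which is the whole point of step 4.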

The risk is not failure, it is hidden failure

There is one caveat I would keep in view the entire time: any system that aggressively compresses or filters tool output becomes part of your trust boundary.

That does not mean Context Mode is unsafe. It means the evaluation standard should be higher than “wow, token savings.” If the summarization layer hides the one ugly stack trace line or the one weird config diff that mattered, your agent can become confidently wrong with better manners.

So yes, I like the philosophy. I also think the right mindset is not worship. It is instrumentation. Measure what the model sees. Measure what it misses. Check whether the savings still hold when the repo gets weird.

That point actually lines up with the broader harness engineering theme. Better harnesses unlock more capability, but they also become more of the product. Once that happens, the harness deserves the same skeptical testing you would give any other dependency sitting in the critical path.
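The "measure what it misses" part can start very small. Here is a sketch that checks whether lines you consider critical in the raw tool output survived into the compact version the model saw. The function name and the example patterns are my own assumptions, and it presumes you can capture both the raw and the compacted text for the same tool call.

```python
import re


def dropped_signals(raw: str, compact: str, patterns: list[str]) -> list[str]:
    """Return critical raw lines that do not appear anywhere in the compact output.

    A line is "critical" if it matches any of the given regex patterns.
    Hypothetical helper for illustration only.
    """
    regexes = [re.compile(p) for p in patterns]
    dropped = []
    for line in raw.splitlines():
        stripped = line.strip()
        if not stripped:
            continue
        is_critical = any(r.search(stripped) for r in regexes)
        if is_critical and stripped not in compact:
            dropped.append(stripped)
    return dropped
```

Run it with patterns like `r"Error"` or `r"Traceback"` over a handful of real sessions. An empty list does not prove the filter is safe, but a non-empty one tells you exactly which line the agent never got to see.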

Where I Think Context Mode Is Actually Useful

Big noisy workflows will feel this first

If your agent workflow lives on short prompts and tiny files, you may not care. If your workflow includes Playwright, shell output, log spelunking, giant repo scans, or repeated web fetches, this starts sounding immediately practical.

That is why I think the current attention is justified. The MCP ecosystem got bigger fast, but the hidden tax was always context bloat. Every new tool made agents more capable and more forgetful at the same time. Context Mode is getting traction because it attacks that contradiction directly.

I can especially see it landing with teams doing:

- browser-heavy debugging

- large monorepo exploration

- long review sessions

- multi-agent task splits that keep handing off partial state

- cost-sensitive coding loops where token waste compounds fast

Small clean repos may not need another layer

I would also not pretend this belongs in every setup.

Some teams are going to install this, feel clever for two days, and realize the simpler fix was better prompts, fewer tools, or a stricter working style. That is fine. Not every workflow needs a local indexing layer, hook system, and extra moving parts.

This is where the maintainer’s own framing is useful. In that March 16 issue asking the community to help spread the word, the pitch was not “everyone on earth must use this.” It was “developers using AI coding agents keep hitting the same context wall.” That is much more believable.

If you are not hitting that wall, you do not need the tool yet.

So Would I Install It

For serious agent work, yes, I would test it this week

My honest answer is yes. Not because I think one MCP server solved AI coding. Because this is one of the cleaner examples I have seen of someone attacking a real bottleneck instead of inventing a fake one.

The GeekNews spike on May 3, 2026 makes sense to me. The repo has live traction. The official material is concrete. The maintainer story feels real. The timing fits the industry shift toward harness quality over raw model tribalism. And the claim is easy to falsify, which I always respect.

I would not install it everywhere by default. I would absolutely benchmark it anywhere the agent keeps drowning in browser state, logs, or giant repo output.

The bigger lesson is that the harness is now half the job

That is the piece I do not think developers should miss while everybody passes around the 98 percent number.

Context Mode might win or lose in your environment. The bigger shift is that tools like this now exist because the bottleneck moved. We are no longer only choosing models. We are choosing memory behavior, routing rules, compaction recovery, safe execution patterns, and output discipline.

That is why this story feels bigger than one trending repo. It is another sign that the future of AI coding will be shaped less by model demos and more by the systems wrapped around them.

If you want the wider protocol backdrop behind this trend, start with my Model Context Protocol guide. If you want the next layer above that, my multi-agent orchestration guide is the better follow-up. Context Mode sits right between those two ideas, and that is exactly why it is suddenly hard to ignore.