Anthropic engineer hiring is the part of the AI coding story I cannot stop staring at today.

The loud version is easy to repeat: AI is writing the code now, the software engineer title is going away, and everybody should start learning how to manage agents instead of typing functions. That story has been bouncing around for months, but it got a fresh shove this week because a Reddit post in r/ClaudeAI framed the contradiction perfectly: Anthropic keeps talking like software engineering is being automated away, while its own jobs page still shows a lot of engineering roles.

Honestly? That is a better signal than another benchmark chart.

Benchmarks tell us what a model can do in a cleaned-up task harness. Hiring pages tell us what a company still cannot automate away. And right now Anthropic, the company most associated with Claude Code and agentic coding, is still hiring engineers across research, product, infrastructure, security, compute, reliability, sandboxing, developer productivity, and applied AI.

I do not read that as hypocrisy. I read it as the real shape of the transition finally leaking through the marketing.

Anthropic Engineer Hiring Is The Part Worth Watching

The contradiction is real, but it is not simple

CIO published a neat framing on May 4: software engineers are not being replaced so much as moved from typing code to orchestrating agents. The article points to Anthropic still having dozens of software engineering openings, including high-compensation senior roles, while the company also talks about Claude Code producing most internal code.

That sounds absurd if you think “software engineer” means “person who manually enters implementation text into files.”

But that definition has been too small for a long time.

Even before AI coding tools, the job was already about deciding what to build, finding the smallest useful change, understanding weird production constraints, reviewing tradeoffs, deleting bad abstractions, and carrying context across teams. The typing part mattered. It still does sometimes. But it was never the whole job.

Anthropic’s own careers page makes that obvious. As of this writing, the public jobs page lists 71 open roles under AI Research and Engineering, 22 under Engineering and Design - Product, 23 under Software Engineering - Infrastructure, and 27 under Security. You do not hire that much engineering muscle because syntax has become precious. You hire it because the systems around AI code are becoming harder to operate.

The job is moving toward judgment

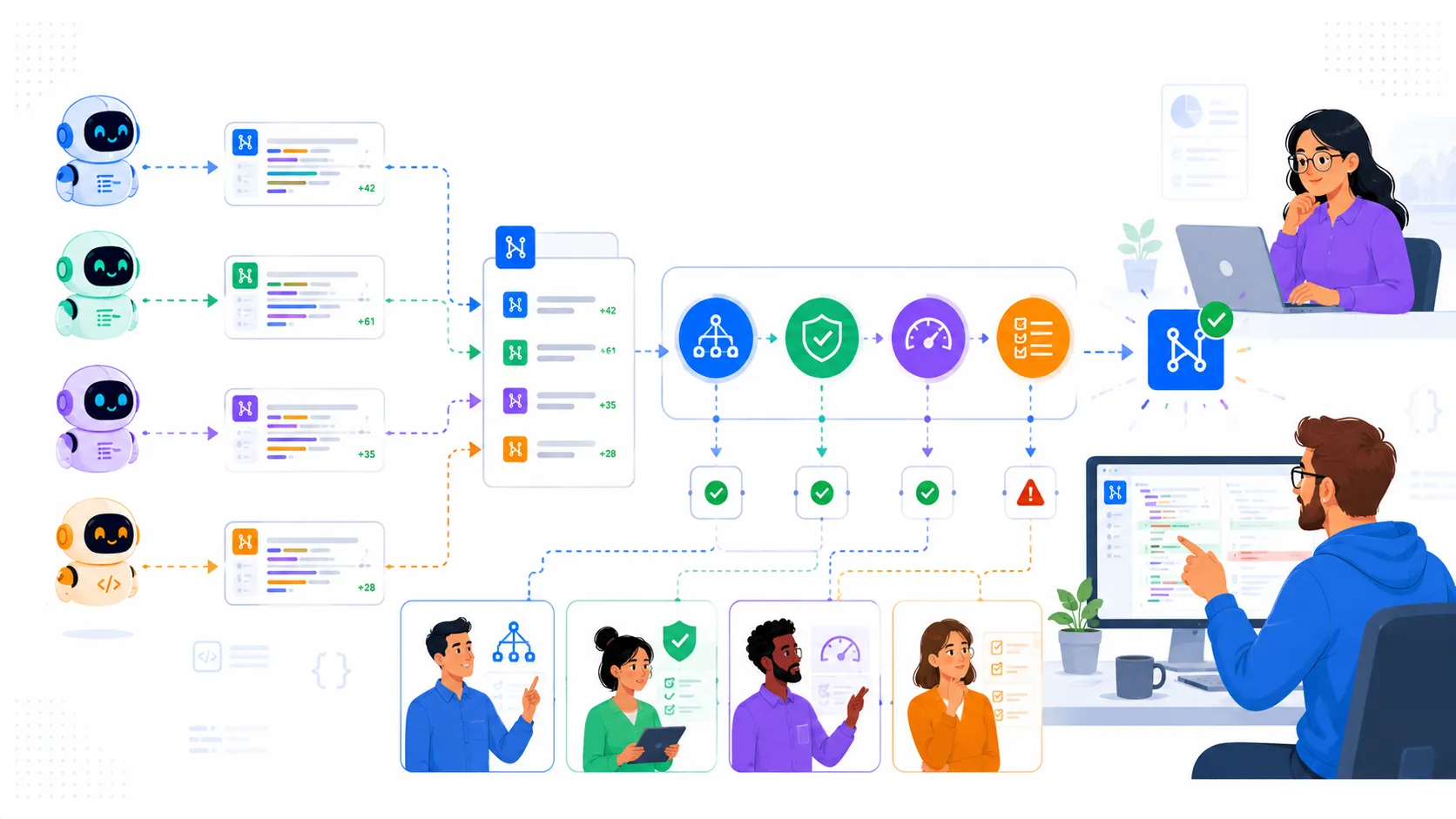

Anthropic’s 2026 Agentic Coding Trends Report says the quiet part out loud: software development is shifting from writing code to orchestrating agents that write code. It also says leaders are still balancing productivity against oversight, quality, and security.

That second sentence is where the work lives.

I keep seeing teams talk about agentic coding like the only question is “how fast can it generate the pull request?” That is the easy part now. The hard part is the part after generation:

- Is the change even pointed at the right problem?

- Did it preserve the boring production invariant nobody wrote down?

- Did it create a second architecture inside the first architecture?

- Did it pass tests because the tests were meaningful, or because the tests were also too optimistic?

- Can a human explain why this should ship?

That is software engineering. It just has a different amount of machine-generated text in the middle.

I wrote about the cost version of this in “Uber AI Coding Costs Forced A Budget Reset In Four Months.” The lesson there was not “AI is too expensive.” It was that successful adoption creates new control problems. Anthropic’s hiring page is the same lesson from a different angle. If the tools are good enough to flood teams with useful code, the bottleneck moves to review, architecture, reliability, security, and product judgment.

The AI Coding Replacement Story Is Missing A Layer

Code output is not shipped software

Fortune covered the viral Anthropic and OpenAI engineer claims back in January, including the idea that some top engineers no longer write code by hand and that Anthropic reports a large majority of internal code as AI-generated. That is wild. I am not going to pretend it is normal.

But “AI-generated code” and “production software” are not the same unit.

A model can draft a feature. It can write tests. It can refactor a subsystem. It can even chain tools, inspect failures, and make a second attempt. I use this stuff every day, and when it is on, it feels ridiculous. The first time an agent edits five files correctly while I am mostly reviewing intent, it does feel like the old loop cracked.

Then the same agent misses a hidden business rule, overbuilds a tiny change, adds a fallback that hides data loss, or invents a migration path that works only in the happy path.

That is the annoying middle. It is also why the replacement narrative keeps feeling too clean.

The skill premium moved up the stack

Moneycontrol’s February writeup captured the same tension: Anthropic says its AI writes nearly all internal code, but the company still says engineers matter because someone has to prompt, coordinate, decide, review, and plan. Strip away the PR language and that is basically the current job description for serious AI-assisted development.

Not “write every line.”

Also not “trust the model and go home.”

It is closer to this:

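```shell
# Size of the change and the files it touched
git diff --stat
git diff --name-only

# Words that often mark a shortcut or a hidden risk
rg "TODO\\(|fallback|temporary|skip validation|unsafe" .

# Build the site (Jekyll, including future-dated posts) to confirm it still builds
bundle exec jekyll build --future
```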

That little sequence is not magic. It is just the habit of treating generated code as a fast junior engineer with unlimited stamina and no production memory. You still inspect the size of the change. You still check the weird words. You still build the thing. You still ask whether the files touched match the intent.

The more agents generate, the more valuable that judgment becomes.

Why This Hit Developers So Hard

It touches job anxiety and tool reality at the same time

The Reddit thread worked because it compressed two truths that developers are already carrying around.

One truth: AI coding tools are genuinely useful. The jump from autocomplete to repo-aware agents changed my working rhythm. I can ask for a change, watch the agent inspect the codebase, and then spend more time reviewing the approach than filling in the repetitive parts. That is not hype. That is just Tuesday now.

The other truth: the labor market feels worse than the demo videos. Entry-level roles are harder to find. Teams are expected to ship more with less. Executives talk about productivity while quietly turning engineering headcount into a spreadsheet experiment. So when an AI lab says code is going away while also hiring expensive engineers, people hear a contradiction because their own companies are using the same language to justify cuts.

Both reactions are fair.

What is not fair is pretending the current tools remove engineering accountability. They do not. They increase the amount of implementation that can exist before anybody has deeply understood it.

That is a strange risk. Old teams were limited by typing speed, coordination overhead, and review capacity. New teams may be limited by review capacity alone. The code arrives first. The understanding catches up later, if the team has the discipline to make it catch up.

The best engineers become force multipliers and brakes

This is the uncomfortable part for career planning.

The engineer who only adds boilerplate by hand is in trouble. No need to soften that. Models are already good at a lot of that work, and the economic pressure will not politely wait for everyone to feel ready.

But the engineer who can turn fuzzy product intent into a tight implementation plan, supervise multiple agents, catch architecture drift, write the missing tests, and explain tradeoffs to humans is not obsolete. That person is suddenly sitting at the narrowest point in the pipeline.

That is why Anthropic hiring engineers while saying code is over does not look contradictory to me. It looks like the company is hiring for the layer above raw implementation.

What I Would Change On A Real Team

Stop measuring only generated code volume

If a team reports “80 percent of our code is AI-generated,” I want the next dashboard, not the victory lap.

Show review time. Show revert rate. Show incident rate. Show how many generated changes needed architecture intervention. Show how many PRs shipped with tests that failed to catch a regression. Show how often agents touched files outside the intended boundary.

Otherwise the metric is mostly theater.

The useful metric is not how much code the model wrote. The useful metric is how much correct, maintainable, product-aligned change the team shipped without increasing future drag.
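Most of those numbers are simple ratios once the data exists. The sketch below assumes a team can export one CSV row per merged PR, with review time in minutes and 0/1 flags for the outcomes listed above. The column layout and the `pr_metrics` function name are hypothetical and do not reflect any real tool's export format. awk does the arithmetic.

```shell
#!/usr/bin/env bash
# Hypothetical input: one CSV row per merged PR, with a header row:
#   pr_id,review_minutes,reverted,incident,arch_fix,missed_regression,out_of_bounds
# The last five columns are 0/1 flags. The layout is illustrative only.
pr_metrics() {
  awk -F, '
    NR == 1 { next }                 # skip the header row
    {
      n++
      review   += $2                 # total review minutes
      reverted += $3                 # PRs later reverted
      incident += $4                 # PRs linked to an incident
      arch     += $5                 # PRs that needed architecture intervention
      missed   += $6                 # PRs whose tests missed a regression
      oob      += $7                 # PRs that touched files outside the boundary
    }
    END {
      if (n == 0) { print "no PRs"; exit }
      printf "prs=%d\n", n
      printf "avg_review_minutes=%.1f\n", review / n
      printf "revert_rate=%.2f\n", reverted / n
      printf "incident_rate=%.2f\n", incident / n
      printf "arch_intervention_rate=%.2f\n", arch / n
      printf "missed_regression_rate=%.2f\n", missed / n
      printf "out_of_bounds_rate=%.2f\n", oob / n
    }'
}
```

For two sample rows with 30 and 90 minutes of review time, one of them reverted, it prints `prs=2`, `avg_review_minutes=60.0`, `revert_rate=0.50`, and one line for each remaining rate. A real dashboard needs a trustworthy source for those flags. The ratios themselves are the easy part.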

Make agent supervision a real engineering skill

This is where I think a lot of teams will get the transition wrong.

They will hand everyone a coding agent, celebrate the first speed boost, and then discover six months later that the codebase grew strange new shapes. Not broken exactly. Just harder to reason about. More duplicated patterns. More local fixes. More “why did it do that?” moments.

The fix is not banning agents. That ship has sailed.

The fix is making supervision explicit. Small plans before large edits. Diff ownership. Clear stop conditions. Better test gates. Local conventions encoded where agents can actually read them. Reviews that ask “does this fit the system?” instead of only “does this pass?”

That is not anti-AI. That is how AI coding becomes boring enough to trust.
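Some of that supervision can be mechanical. Diff ownership and a clear stop condition, for example, can start as a check that the files an agent touched stay inside the area its task was scoped to. The sketch below works under that assumption. `check_diff_boundary` is a hypothetical helper, and the allowed path prefixes would come from whatever plan the team wrote before the edit.

```shell
#!/usr/bin/env bash
# Hypothetical gate: read changed file paths on stdin (for example from
# `git diff --name-only main...HEAD`) and flag any path that does not start
# with one of the allowed prefixes passed as arguments.
# Prints each offending path and returns 1 if any were found, else 0.
check_diff_boundary() {
  local path prefix inside status=0
  while IFS= read -r path; do
    [ -z "$path" ] && continue          # ignore blank lines
    inside=0
    for prefix in "$@"; do
      case "$path" in
        "$prefix"*) inside=1; break ;;  # path starts with an allowed prefix
      esac
    done
    if [ "$inside" -eq 0 ]; then
      echo "outside boundary: $path"
      status=1
    fi
  done
  return "$status"
}

# Example wiring (assumes a git checkout with a main branch):
# git diff --name-only main...HEAD | check_diff_boundary src/billing/ tests/billing/
```

Prefix matching is deliberately crude. It catches the agent that quietly edits a shared config file. It cannot tell whether the changes inside the boundary fit the system, and that is still the reviewer's job.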

So yeah, the headline says Anthropic still needs engineers after saying code is over. It is a good headline because it sounds like a gotcha.

But the real point is sharper: the code-writing era may be ending, and the engineering era is getting more expensive.