Anthropic's finance agents are the first enterprise agent release this week that made me think: okay, the generic agent era might be ending faster than expected.

I do not mean generic agents are useless. I use them every day. But the pitch has always had a strange gap in the middle. Vendors say “agent,” developers hear “prompt plus tools,” compliance hears “please do not let this thing touch client work,” and everyone quietly hopes the demo path survives contact with real data.

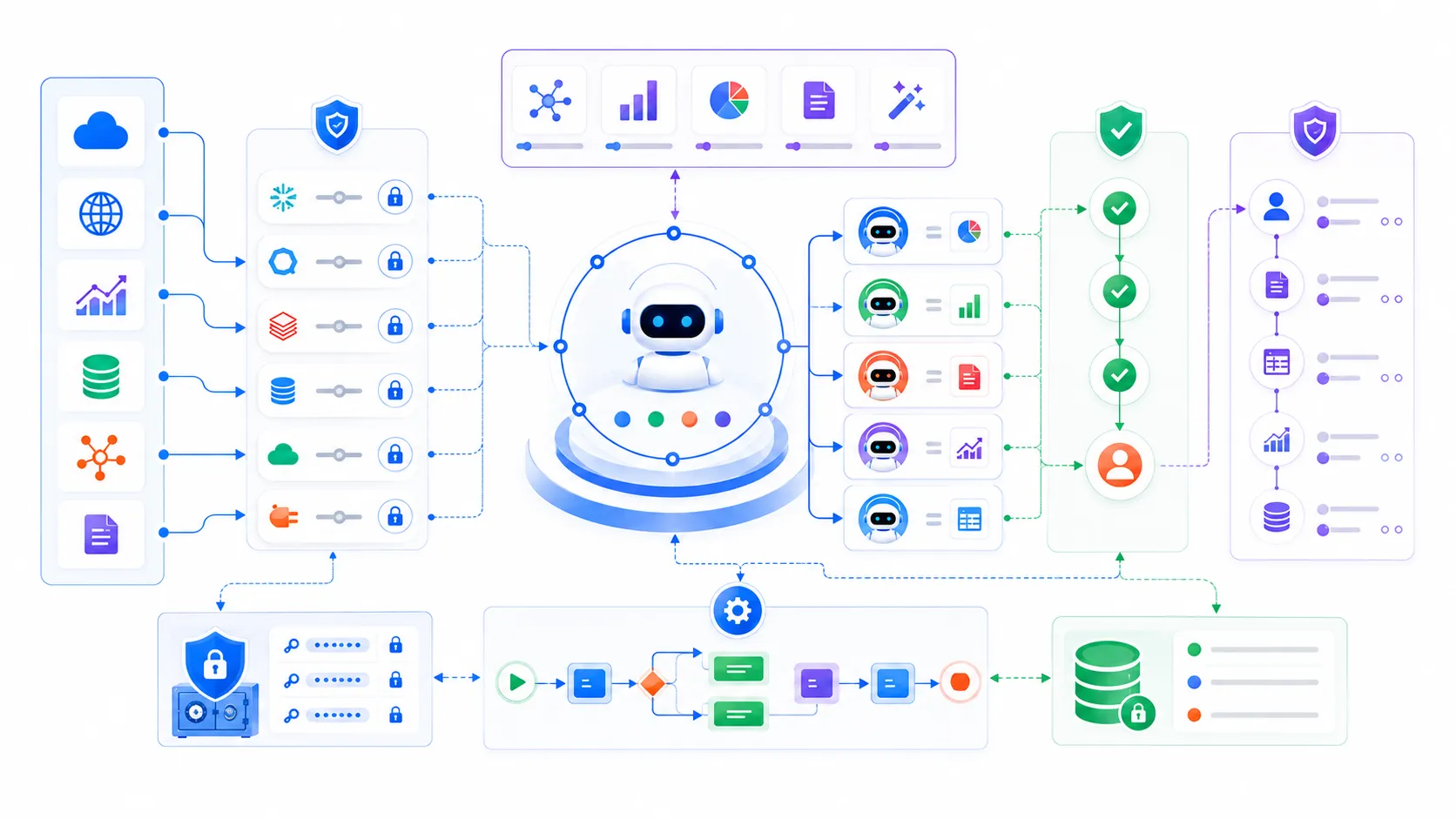

Anthropic’s new release is different because it is not selling one magic assistant. It is shipping ten finance-specific agent templates for things like pitchbooks, KYC screening, earnings reviews, valuation checks, model building, and month-end close. The interesting part is not that Claude can write a memo. We already knew models could draft decent text. The interesting part is the packaging: skills, connectors, subagents, Microsoft 365 add-ins, audit logs, managed credentials, and a GitHub repo full of runnable structure.

That is a much more serious signal than another leaderboard screenshot.

If you build internal AI tools, Anthropic's finance agents matter because they point at where enterprise agent development is probably going next. Not bigger chat windows. More opinionated workflow kits.

Anthropic Finance Agents Are Templates, Not Just Prompts

The useful part is the shape

Anthropic’s announcement says each finance agent template packages three pieces: skills, connectors, and subagents. That sounds like vendor vocabulary until you look at the repo layout.

The public GitHub repository has plugins/agent-plugins/, plugins/vertical-plugins/, managed-agent-cookbooks/, Microsoft 365 install tooling, validation scripts, deployment scripts, orchestration examples, and per-agent security notes. That matters. It means the artifact is not only a clever instruction file. It is closer to a deployable workflow skeleton.

That is exactly where most internal agent projects get messy.

A team starts with a prompt. Then the prompt needs a tool. Then the tool needs permissions. Then someone asks where the data came from. Then finance wants the model to use the firm’s valuation policy. Then compliance wants the tool calls logged. Then the agent needs to hand off a narrow subtask to another model call. Suddenly the “quick prototype” has a hidden platform inside it.

Anthropic is basically saying: stop rediscovering that platform one enterprise at a time.
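To make that hidden platform concrete, here is a minimal Python sketch of the two pieces that usually show up first: a permission check on every tool call and an audit log of what the agent attempted. `GovernedTool`, `AgentRuntime`, and the scope strings are hypothetical names for illustration, not anything taken from Anthropic's repo.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable


class PermissionDenied(Exception):
    """Raised when an agent calls a tool outside its granted scopes."""


@dataclass
class GovernedTool:
    """A tool plus the scope an agent must hold to call it (hypothetical)."""
    name: str
    required_scope: str
    fn: Callable[..., Any]


@dataclass
class AgentRuntime:
    """Checks scopes and records every attempted tool call, allowed or not."""
    agent: str
    scopes: set[str]
    audit_log: list[dict] = field(default_factory=list)

    def call(self, tool: GovernedTool, **kwargs: Any) -> Any:
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "agent": self.agent,
            "tool": tool.name,
            "args": kwargs,
        }
        if tool.required_scope not in self.scopes:
            entry["outcome"] = "denied"
            self.audit_log.append(entry)
            raise PermissionDenied(f"{self.agent} lacks scope {tool.required_scope!r}")
        try:
            result = tool.fn(**kwargs)
        except Exception as exc:
            # Failed calls are logged too, then re-raised to the caller.
            entry["outcome"] = f"error: {exc}"
            self.audit_log.append(entry)
            raise
        entry["outcome"] = "ok"
        self.audit_log.append(entry)
        return result


read_prices = GovernedTool("read_prices", "market_data:read",
                           lambda ticker: {"ticker": ticker, "close": 101.5})
post_entry = GovernedTool("post_journal_entry", "ledger:write", lambda **entry: "posted")

runtime = AgentRuntime(agent="earnings-reviewer", scopes={"market_data:read"})
print(runtime.call(read_prices, ticker="ACME"))
try:
    runtime.call(post_entry, amount=100)
except PermissionDenied as exc:
    print("blocked:", exc)
print(json.dumps([e["outcome"] for e in runtime.audit_log]))  # ["ok", "denied"]
```

The denied call still lands in the log. That is the point: the audit trail should record what the agent tried, not only what it was allowed to do.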

Ten agents is less important than one pattern

The ten templates are useful marketing because they make the release concrete. Pitch builder, meeting preparer, earnings reviewer, model builder, market researcher, valuation reviewer, GL reconciler, month-end closer, statement auditor, and KYC screener are all easy to picture.

But I would not copy the list blindly.

The pattern is the part worth stealing:

```bash
claude plugin marketplace add anthropics/claude-for-financial-services
claude plugin install financial-analysis@claude-for-financial-services
claude plugin install pitch-agent@claude-for-financial-services
```

That shape is portable. A healthcare team could have intake reviewer, prior authorization checker, claims anomaly triage, and clinical note auditor. A logistics team could have lane pricing reviewer, exception resolver, customs document checker, and demand forecast analyst. A developer platform team could have migration planner, incident reviewer, dependency risk checker, and API docs maintainer.

The trick is not naming agents. The trick is deciding which workflows deserve a repeatable package with skills, connectors, approvals, and logs.

That is where the real product work starts.
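One way to keep yourself honest about that decision is to write the package down as data and ask what is missing. This is a hypothetical spec in Python: the `WorkflowPackage` fields simply mirror the four pieces named above (skills, connectors, approvals, logs), and the example agents borrow names from the developer-platform list. It is a thinking tool, not Anthropic's manifest format.

```python
from dataclasses import dataclass, field


@dataclass
class WorkflowPackage:
    """Hypothetical spec for a repeatable agent workflow; field names are illustrative."""
    name: str
    skills: list[str] = field(default_factory=list)      # domain instructions, e.g. Markdown files
    connectors: list[str] = field(default_factory=list)  # governed data sources the agent may read
    approvals: list[str] = field(default_factory=list)   # steps that need a human sign-off
    log_tool_calls: bool = False                         # whether every tool call is recorded

    def gaps(self) -> list[str]:
        """Return the missing pieces that keep this from being more than a prompt."""
        missing = []
        if not self.skills:
            missing.append("skills")
        if not self.connectors:
            missing.append("connectors")
        if not self.approvals:
            missing.append("approvals")
        if not self.log_tool_calls:
            missing.append("logs")
        return missing


incident_reviewer = WorkflowPackage(
    name="incident-reviewer",
    skills=["postmortem-format.md", "severity-rubric.md"],
    connectors=["pager-history", "deploy-log"],
    approvals=["publish-postmortem"],
    log_tool_calls=True,
)
renamed_prompt = WorkflowPackage(name="api-docs-maintainer", skills=["style-guide.md"])

print(incident_reviewer.gaps())  # []
print(renamed_prompt.gaps())     # ['connectors', 'approvals', 'logs']
```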

Wall Street Is A Harsh Test Bed

Finance makes weak agent design obvious

I like finance as an agent test case because it punishes vague systems immediately.

An analyst does not need an agent that says “based on available information” fifteen times. They need a comps table that uses the right peer set, a model that preserves formula logic, a deck that updates when assumptions change, and a memo that can survive review by someone who knows the sector cold.

The same goes for compliance and operations. A KYC screener that confidently misses a source document is not cute. A month-end close agent that cannot explain the provenance of a journal entry is not “agentic.” It is an incident waiting for a calendar invite.

So the Wall Street angle is not just flashy. It forces the architecture to deal with data access, repeatability, permission boundaries, review loops, and auditability. Those are the parts most consumer agent demos politely avoid.
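Of those, auditability is the easiest to start enforcing in code. A minimal sketch, assuming a hypothetical month-end close step that proposes journal entries: the function refuses to produce an entry with no linked source document, so the gap goes to a human instead of into the ledger. `SourceRef`, `propose_entry`, and the invoice file name are all illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceRef:
    """Pointer to the document a figure came from (fields are hypothetical)."""
    doc_id: str
    locator: str  # e.g. a page, cell, or line reference inside the document


@dataclass(frozen=True)
class ProposedJournalEntry:
    """An entry the agent proposes; a human or a later step still has to post it."""
    account: str
    amount: float
    memo: str
    sources: tuple[SourceRef, ...]  # immutable so the audit trail cannot drift


def propose_entry(account: str, amount: float, memo: str,
                  sources: list[SourceRef]) -> ProposedJournalEntry:
    """Refuse to emit an entry that cannot explain where it came from."""
    if not sources:
        raise ValueError(f"no provenance for entry to {account!r}; route to a reviewer")
    return ProposedJournalEntry(account, amount, memo, tuple(sources))


entry = propose_entry(
    "accrued-utilities", 1250.0, "March utilities accrual",
    [SourceRef("invoice-4471.pdf", "page 1, total due")],
)
print(entry.sources[0].doc_id)  # invoice-4471.pdf
```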

Axios framed the move as Anthropic deepening its Wall Street push, with ten agents aimed at common financial workflows. TechRadar focused on the “days rather than months” deployment claim. I am skeptical of that claim in a healthy way. Days is plausible for a pilot. Real production still depends on your data, policies, approvals, and the number of old spreadsheets holding the business together.

But the direction is believable. If the agent template already has the skill bundle, data connector shape, subagent handoffs, and security notes, the first implementation meeting starts further down the road.

The Microsoft 365 angle is not a side quest

The Microsoft 365 part is quietly important.

Claude working across Excel, PowerPoint, Word, and Outlook sounds like a productivity-suite checkbox. For finance, that is actually the work surface. Models live in Excel. Pitchbooks live in PowerPoint. Memos live in Word. Client context and meeting chaos live in Outlook.

If context can move across those surfaces without the analyst re-explaining everything, the agent becomes less like a chat app and more like a workflow layer.

That is also where risk increases. Once an agent touches real files, real calendars, real inboxes, and real client deliverables, the governance story cannot be a slide after the demo. It has to be part of the runtime.
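A small sketch of what “part of the runtime” can mean: a gate that lets internal actions run but sends anything touching client material to a reviewer, with rejection as a normal return value rather than an exception path nobody tests. `PendingAction`, `execute_with_gate`, and the reviewer callback are hypothetical; in practice the reviewer would be a UI or a ticket, not a function that always says no.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Decision(Enum):
    APPROVE = "approve"
    REJECT = "reject"


@dataclass
class PendingAction:
    """Something the agent wants to do to a real file, inbox, or deliverable."""
    description: str
    target: str
    touches_client_material: bool


def execute_with_gate(action: PendingAction,
                      reviewer: Callable[[PendingAction], Decision],
                      run: Callable[[], str]) -> str:
    """Run internal actions directly; client-facing ones need an explicit approval."""
    if action.touches_client_material:
        if reviewer(action) is not Decision.APPROVE:
            # Anything other than an explicit approval counts as "no"; run() is never called.
            return f"rejected: {action.description} ({action.target})"
    return run()


def says_no(action: PendingAction) -> Decision:
    return Decision.REJECT


deck_update = PendingAction("refresh comps slide", "client_pitch.pptx", True)
scratch_note = PendingAction("draft internal notes", "scratch.md", False)

print(execute_with_gate(deck_update, says_no, run=lambda: "slide updated"))
# rejected: refresh comps slide (client_pitch.pptx)
print(execute_with_gate(scratch_note, says_no, run=lambda: "notes drafted"))
# notes drafted
```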

I wrote about cheap Claude API proxies earlier, and the trust-boundary lesson applies here too: the endpoint and the integration path decide who receives sensitive material. In finance, that material is not just source code. It is deal context, client names, portfolio assumptions, internal methodology, and sometimes market-moving analysis.

Developers Should Read The Repo Before The Press Release

The repo is the most honest artifact

The official announcement is useful, but the GitHub repo is where developers should spend time.

The layout tells you how Anthropic wants agent systems to be assembled. Agents are self-contained plugins. Skills live in vertical bundles and get synced into named agents. Commands expose explicit workflows. Connectors sit behind MCP servers. Managed-agent cookbooks add wrappers for headless deployment. Validation scripts check manifests and cross-file references.

That is not perfect. It is still a vendor-controlled ecosystem, and the real operational story depends on paid products, access controls, partner data, and customer-specific deployment work. But it is concrete enough to learn from.

The line that stood out to me is that everything is file-based: Markdown and JSON, no build step. That is boring in the best way. Agent configuration should be reviewable in pull requests. Skills should diff cleanly. A compliance note should not hide inside a dashboard nobody exports. If a workflow is important enough to automate, it is important enough to version.
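File-based config also makes validation cheap. Here is a sketch of the kind of cross-file reference check the repo's validation scripts are described as doing, written against an assumed layout (`agents/<name>/agent.json` listing skill names, `skills/<name>/SKILL.md` holding each skill). The layout and the function are hypothetical; check the actual repo before copying any paths.

```python
import json
from pathlib import Path


def validate_agents(root: Path) -> list[str]:
    """Report agent manifests that are malformed or reference skills which do not exist.

    Assumed (hypothetical) layout:
      root/agents/<agent>/agent.json  -> {"name": "...", "skills": ["skill-a", ...]}
      root/skills/<skill>/SKILL.md
    """
    agents_dir = root / "agents"
    if not agents_dir.is_dir():
        return [f"{agents_dir}: directory missing"]
    errors: list[str] = []
    skills_dir = root / "skills"
    for manifest in sorted(agents_dir.glob("*/agent.json")):
        try:
            data = json.loads(manifest.read_text(encoding="utf-8"))
        except json.JSONDecodeError as exc:
            errors.append(f"{manifest}: invalid JSON ({exc.msg})")
            continue
        if not isinstance(data, dict):
            errors.append(f"{manifest}: expected a JSON object")
            continue
        if "name" not in data:
            errors.append(f"{manifest}: missing 'name'")
        for skill in data.get("skills", []):
            if not (skills_dir / skill / "SKILL.md").is_file():
                errors.append(f"{manifest}: unknown skill {skill!r}")
    return errors
```

Run in CI, a non-empty list can fail the pull request, which keeps a renamed or deleted skill from silently breaking an agent that still points at it.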

This is where a lot of “agent platform” projects lose me. They create beautiful UIs for configuring behavior, then make it painful to audit what changed. Finance teams will not tolerate that forever. Neither should engineering teams.

Subagents are useful only when the handoff is narrow

Subagents are the spicy part of the release, but also the easiest part to abuse.

Calling another model for a specific subtask makes sense when the boundary is tight: comparables selection, methodology check, formula audit, source extraction, variance explanation. It gets ugly when every uncertain moment becomes another vague handoff.

My rule of thumb is simple. A subagent should have a smaller job, a smaller context, and a clearer success condition than the parent agent. If it does not, you probably created a more expensive way to be confused.
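That rule of thumb is concrete enough to check before a handoff happens. A minimal sketch, assuming each handoff declares a named subtask, the documents it receives, and a success check. The allow-list reuses the narrow subtasks listed earlier; `Handoff`, `review_handoff`, and the file names are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# The narrow subtasks named in the text; anything else is treated as a vague handoff.
NARROW_SUBTASKS = {
    "comparables_selection", "methodology_check", "formula_audit",
    "source_extraction", "variance_explanation",
}


@dataclass
class Handoff:
    subtask: str
    context_docs: set[str]
    success_check: Optional[Callable[[str], bool]]


def review_handoff(parent_context: set[str], handoff: Handoff) -> list[str]:
    """List the ways a handoff breaks the smaller job / smaller context / clear success rule."""
    reasons = []
    if handoff.subtask not in NARROW_SUBTASKS:
        reasons.append(f"subtask {handoff.subtask!r} is not a named narrow job")
    if not handoff.context_docs < parent_context:  # `<` on sets means strict subset
        reasons.append("context is not a strict subset of the parent's")
    if handoff.success_check is None:
        reasons.append("no success condition")
    return reasons


parent_docs = {"10-K.pdf", "peer_list.xlsx", "model.xlsx", "house_style.md"}
audit = Handoff("formula_audit", {"model.xlsx"},
                success_check=lambda out: "broken references: 0" in out)
vague = Handoff("figure_it_out", set(parent_docs), success_check=None)

print(review_handoff(parent_docs, audit))       # []
print(len(review_handoff(parent_docs, vague)))  # 3
```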

The financial-services repo hints at that discipline by tying subagents to named workflows and security notes. That is good. The hard part for teams copying the pattern will be resisting the urge to make a swarm because “multi-agent” sounds advanced.

Honestly, I would rather ship one boring agent with excellent connectors and audit logs than five theatrical subagents that nobody can debug.

The Bigger Shift Is Vertical Agent Engineering

Generic assistants are becoming the raw material

The uncomfortable thing for developers is that “agent builder” is becoming less impressive by itself.

Two years ago, wiring an LLM to tools felt advanced. Now the real question is whether the system understands a domain well enough to reduce review burden instead of increasing it. Can it use the right data source? Can it follow the firm’s conventions? Can it leave an audit trail? Can a senior person approve or reject the work without reverse-engineering the whole run?

That is why this release feels bigger than finance. It makes vertical agent engineering look like a normal software discipline. You define the workflow. You package the skills. You attach governed connectors. You specify handoffs. You test the outputs against domain expectations. You keep humans in the loop where money, clients, regulation, or reputation are involved.
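The “test the outputs against domain expectations” step is the one teams skip most often. A sketch, assuming an agent returns a comps table as a list of rows: the check compares the peer set against an approved list and flags missing or extreme multiples. The field names, the 50x EV/EBITDA ceiling, and `check_comps_output` are illustrative assumptions, not a valuation policy.

```python
def check_comps_output(output: dict, approved_peers: set[str],
                       max_ev_ebitda: float = 50.0) -> list[str]:
    """Run checks a reviewer would otherwise do by hand; thresholds are illustrative."""
    failures = []
    peers = {row["ticker"] for row in output["rows"]}
    unexpected = peers - approved_peers
    missing = approved_peers - peers
    if unexpected:
        failures.append(f"unapproved peers: {sorted(unexpected)}")
    if missing:
        failures.append(f"missing peers: {sorted(missing)}")
    for row in output["rows"]:
        multiple = row.get("ev_ebitda")
        # Flag missing, non-positive, or extreme multiples for human review.
        if multiple is None or not (0 < multiple <= max_ev_ebitda):
            failures.append(f"{row['ticker']}: implausible EV/EBITDA {multiple}")
    return failures


agent_output = {"rows": [
    {"ticker": "AAA", "ev_ebitda": 11.2},
    {"ticker": "BBB", "ev_ebitda": 9.8},
    {"ticker": "ZZZ", "ev_ebitda": 240.0},
]}
for failure in check_comps_output(agent_output, approved_peers={"AAA", "BBB", "CCC"}):
    print(failure)
```

Run over saved agent outputs as a regression suite, a check like this turns a prompt or model change that shifts the peer set into a failing test rather than a surprised reviewer.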

The model matters, obviously. Claude Opus 4.7 leading a finance benchmark is useful information. But the system around the model is what makes the release interesting.

The next copycat wave will be noisy

Here is the thing nobody is talking about enough: this will create a lot of bad clones.

Every enterprise software vendor is going to want “ten ready-to-run agents” for their vertical. Some will be real. Many will be renamed prompts with a connector bolted on. The difference will show up in boring places: permission scoping, tool-call logs, validation scripts, reproducible deployment, source-linked outputs, rollback plans, and whether the user can inspect why the agent did something.

That is what I would evaluate first.

Not the demo.

Not the model name.

Not the number of agents.

Show me the repo shape. Show me how skills are versioned. Show me how connectors authenticate. Show me how subagent handoffs are constrained. Show me the audit log. Show me the failure mode when the data source is missing. Show me how a human says no.
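The missing-data case is worth sketching because the tempting failure is silent: the model drafts from memory. Here is a minimal Python version, assuming a hypothetical filings connector backed by a dict, where a missing source raises an explicit error and the workflow returns a blocked result for a human instead of a guess.

```python
from dataclasses import dataclass


class SourceUnavailable(Exception):
    """Raised instead of letting the agent guess when a required source is missing."""


@dataclass
class FilingsConnector:
    """Hypothetical connector backed by a dict; a real one would call a governed data API."""
    store: dict

    def fetch(self, ticker: str, period: str) -> dict:
        key = (ticker, period)
        if key not in self.store:
            raise SourceUnavailable(f"no filing for {ticker} {period}")
        return self.store[key]


def summarize_revenue(conn: FilingsConnector, ticker: str, period: str) -> str:
    """Produce a source-linked line, or an explicit blocked result a human can act on."""
    try:
        filing = conn.fetch(ticker, period)
    except SourceUnavailable as exc:
        # Surface the gap instead of drafting a number from the model's memory.
        return f"BLOCKED: {exc}. Ask an analyst to supply the source."
    return f"{ticker} {period} revenue: {filing['revenue']:,} (source: {filing['doc_id']})"


conn = FilingsConnector({("ACME", "FY2024"): {"revenue": 1200000, "doc_id": "acme-10k-2024"}})
print(summarize_revenue(conn, "ACME", "FY2024"))
# ACME FY2024 revenue: 1,200,000 (source: acme-10k-2024)
print(summarize_revenue(conn, "ACME", "FY2025"))
# BLOCKED: no filing for ACME FY2025. Ask an analyst to supply the source.
```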

If Anthropic's finance agents push the market in that direction, I am glad. We need fewer magical assistants and more inspectable workflow systems.

The agents are not replacing financial professionals this week. That is the lazy headline. The real story is that AI vendors are starting to package domain work the way software teams package production systems: opinionated structure, governed inputs, reusable components, and reviewable output.

That is less dramatic than “AI analyst takes over Wall Street.”

It is also much closer to how this stuff will actually get adopted.