GPT Realtime 2 is the first voice-agent release in a while that made me stop treating speech as a thin wrapper around chat.

The old voice-agent stack was basically a latency sandwich: speech-to-text, LLM call, tool call, LLM call again, text-to-speech, then a nervous hope that the user did not interrupt halfway through. It worked for demos. It got weird in production. The moment a customer changed their mind, spoke over the bot, gave a messy address, or asked the agent to do two things at once, the whole thing started feeling less like software and more like stage magic with a bad network connection.

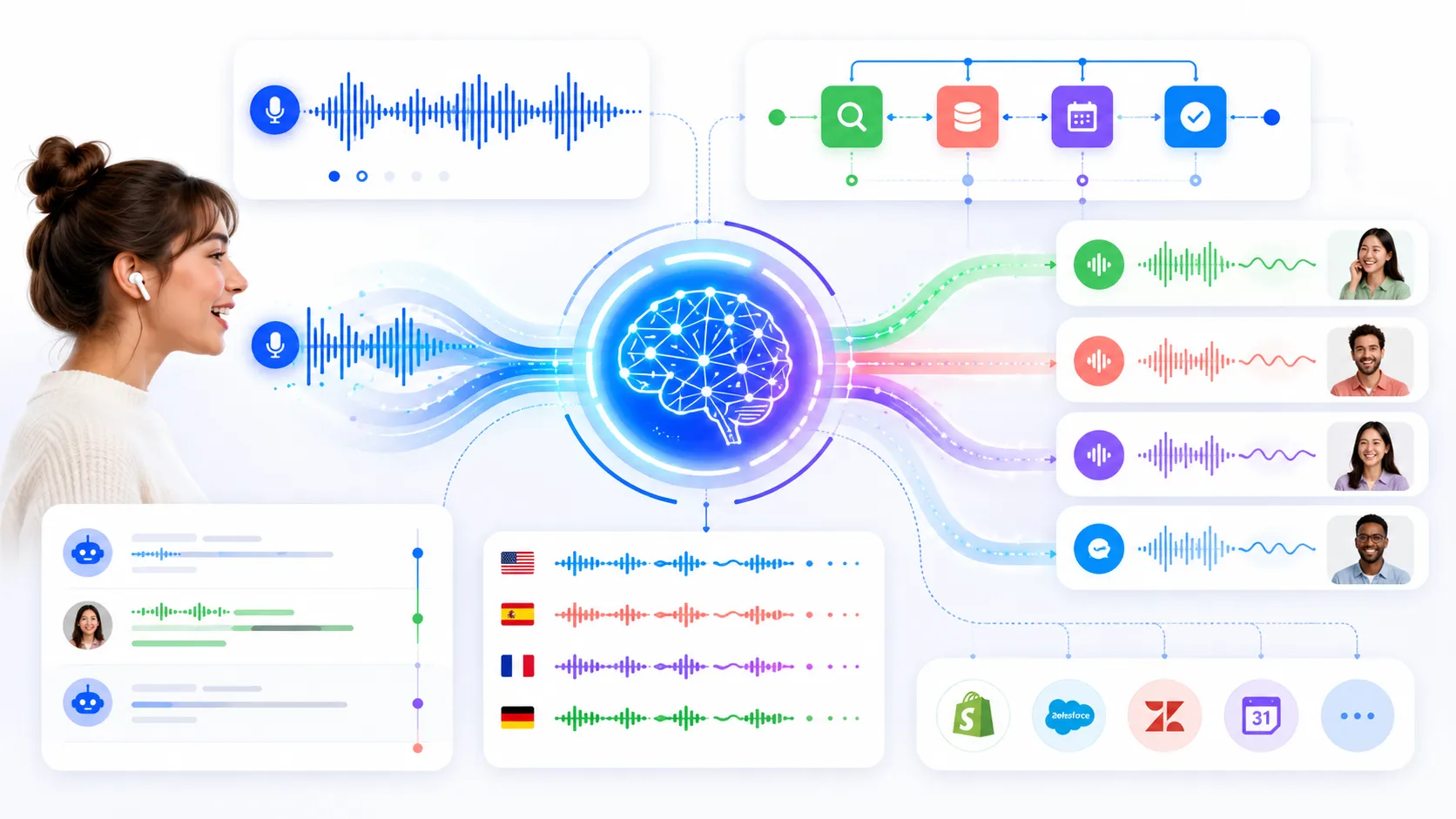

OpenAI’s new realtime voice model announcement changes the discussion because the company is shipping more than a better voice model. It is packaging reasoning, live translation, streaming transcription, tool use, and longer context into the same Realtime API story. That sounds like marketing until you look at the product shape. A voice agent that can reason while the conversation is still moving is a different thing from a chatbot that happens to speak.

I am still skeptical by default. Voice agents have burned a lot of teams. But this release is interesting enough that I would put it into a real prototype this week instead of filing it under “cool API video.”

GPT Realtime 2 Is Not Just Better Speech

Reasoning in voice changes the failure mode

The official GPT Realtime 2 model page calls it OpenAI’s most capable realtime voice model and lists the parts developers actually care about: speech-to-speech interactions, configurable reasoning effort, stronger instruction following, more reliable tool use, a 128K context window, and audio input/output.

That combination matters because most bad voice agents do not fail on pronunciation. They fail on state.

They forget what the user already said. They ask for confirmation at the wrong time. They keep talking after the user interrupts. They call one tool, then lose track of the actual task. They sound confident while doing something half-right. Anyone who has built support automation, appointment booking, sales qualification, or internal helpdesk workflows knows this pain.

So the useful question is not “does it sound human?” I honestly do not care. The better question is: can it keep a live task coherent while the user behaves like a normal person?

That is where GPT Realtime 2 looks more serious than the previous generation. OpenAI says it can handle harder requests, carry conversations forward, call multiple tools, recover more gracefully, and use adjustable reasoning effort. I read that as a direct answer to the production mess, not just a model-quality bump.

Translation and transcription widen the surface area

The release also includes GPT Realtime Translate and GPT Realtime Whisper. Translate handles speech from more than 70 input languages into 13 output languages. Whisper streams speech-to-text while people are still talking.

That trio is the actual story. Voice agents are not one product anymore. They are a bundle of surfaces: live support, translation, meeting notes, call center workflows, field service apps, accessibility tools, healthcare intake, travel support, sales calls, classrooms, and internal copilots that need hands-free interaction.

Some of those products only need transcription. Some need translation. Some need a full tool-using agent. The mistake would be forcing every use case through the most expensive and most agentic path because the demo looked cool.
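Here is roughly how I would encode that routing decision, as a minimal sketch. The `UseCase` shape, the `pickSurface` helper, and the three surface names are my own illustrative assumptions, not anything from an OpenAI SDK.

```typescript
// Hypothetical routing helper. The surface names and the UseCase shape are
// illustrative assumptions, not part of any OpenAI SDK.
type VoiceSurface = "transcription" | "translation" | "agent";

interface UseCase {
  name: string;
  needsToolCalls: boolean; // must the system act on other systems?
  needsDialogue: boolean;  // must it hold a back-and-forth conversation?
  crossLanguage: boolean;  // are speaker and listener in different languages?
}

function pickSurface(useCase: UseCase): VoiceSurface {
  // Only pay for the agentic path when the task needs actions or dialogue.
  if (useCase.needsToolCalls || useCase.needsDialogue) return "agent";
  if (useCase.crossLanguage) return "translation";
  return "transcription";
}

const meetingNotes: UseCase = {
  name: "meeting notes",
  needsToolCalls: false,
  needsDialogue: false,
  crossLanguage: false,
};
const productWalkthrough: UseCase = {
  name: "translated product walkthrough",
  needsToolCalls: false,
  needsDialogue: false,
  crossLanguage: true,
};
const rescheduling: UseCase = {
  name: "appointment rescheduling",
  needsToolCalls: true,
  needsDialogue: true,
  crossLanguage: false,
};

for (const useCase of [meetingNotes, productWalkthrough, rescheduling]) {
  console.log(`${useCase.name} -> ${pickSurface(useCase)}`);
}
```

The point of the ordering is that the agent path is something a use case has to earn, not the default.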

The Production Bill Is Now Part Of The UX

Audio tokens are not vibes

Here is the part I would bring up before anybody gets too excited. The model page lists GPT Realtime 2 at $32 per 1M audio input tokens and $64 per 1M audio output tokens. Text input is cheaper, cached input is much cheaper, and OpenAI’s Realtime cost guide is pretty explicit that voice-agent sessions accrue tokens across modalities.

That means cost is not a finance footnote. It is architecture.

If your agent rambles, your bill goes up. If your system prompt is huge and uncached, your bill goes up. If you keep long histories without trimming, your bill goes up. If your VAD is noisy and creates extra responses, your bill goes up. If you choose high reasoning effort for every small turn, your bill goes up and latency probably gets worse too.

This is where voice agents get uncomfortable. A text chatbot can be verbose and still feel cheap enough. A realtime voice system converts every extra second into compute, latency, and user impatience.
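To make the pricing above concrete, here is a back-of-the-envelope sketch. The two per-1M prices are the ones quoted from the model page; the token counts are hypothetical numbers I picked only to show the arithmetic, and the estimate deliberately ignores text and cached-input tokens.

```typescript
// Prices per 1M audio tokens, as quoted above from the model page.
const AUDIO_INPUT_USD_PER_1M = 32;
const AUDIO_OUTPUT_USD_PER_1M = 64;

interface SessionUsage {
  audioInputTokens: number;
  audioOutputTokens: number;
}

// Audio-only estimate: text and cached-input tokens are deliberately ignored.
function estimateAudioCostUsd(usage: SessionUsage): number {
  const input = (usage.audioInputTokens * AUDIO_INPUT_USD_PER_1M) / 1e6;
  const output = (usage.audioOutputTokens * AUDIO_OUTPUT_USD_PER_1M) / 1e6;
  return input + output;
}

// Hypothetical token counts, chosen only to make the arithmetic visible.
const conciseCall: SessionUsage = { audioInputTokens: 10000, audioOutputTokens: 5000 };
const ramblingCall: SessionUsage = { audioInputTokens: 10000, audioOutputTokens: 20000 };

console.log(estimateAudioCostUsd(conciseCall).toFixed(2));  // "0.64"
console.log(estimateAudioCostUsd(ramblingCall).toFixed(2)); // "1.60"
```

Same user, same input audio. The only difference is how much the agent talks, and the rambling version costs two and a half times as much.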

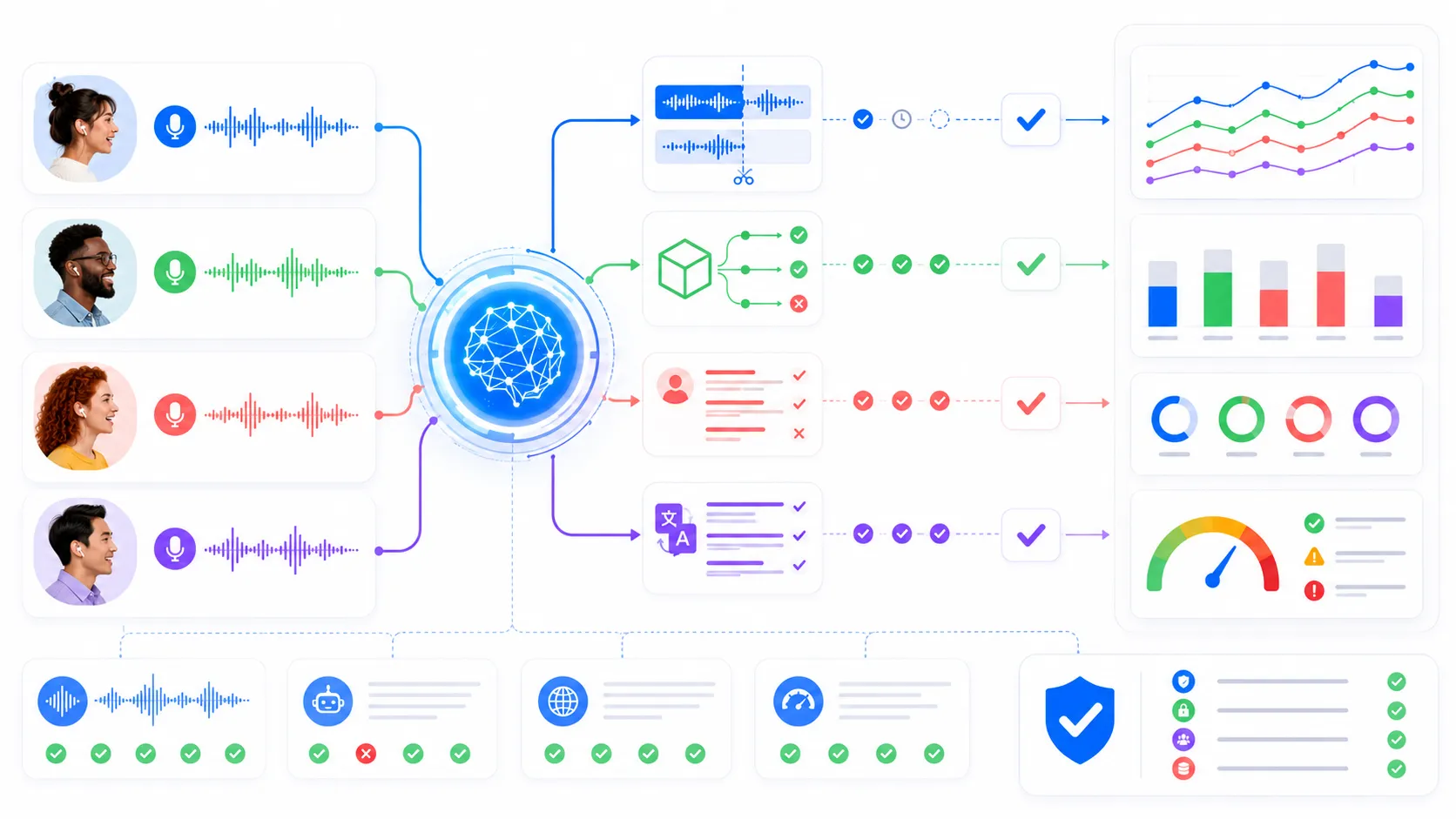

The boring tests matter more than the launch headline

I would not start with a benchmark. I would start with ugly test calls.

| Test | What I Want To See |
|---|---|
| User interrupts mid-answer | Agent stops, updates state, and does not repeat the old plan |
| Tool call takes too long | Agent explains the wait without hallucinating a result |
| User changes one constraint | Agent preserves the rest of the task instead of restarting |
| Background noise hits VAD | Session does not produce random billable nonsense |

This is the same lesson I keep running into with agents in general. The visible model quality is only one slice of the product. The control plane matters more. We hit the same theme in the Cloudflare agent deployment post: once agents can touch real systems, permissions, audit trails, and rollback plans become part of the feature, not paperwork.
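Two rows of that test table, the interruption and the changed constraint, are easy to pin down as a pure state reducer before any model is involved. This is a minimal sketch under my own assumptions: `TaskState`, `CallEvent`, and `applyEvent` are hypothetical names, and a real session would carry far more state.

```typescript
// Hypothetical task state for a booking-style flow; field names are illustrative.
interface TaskState {
  constraints: Record<string, string>; // e.g. date, time, location
  pendingPlan: string | null;          // what the agent was about to say or do
  interruptions: number;
}

type CallEvent =
  | { kind: "interruption" }
  | { kind: "constraint_change"; key: string; value: string };

function applyEvent(state: TaskState, event: CallEvent): TaskState {
  switch (event.kind) {
    case "interruption":
      // Drop the stale plan so the agent cannot repeat it after the user cuts in.
      return { ...state, pendingPlan: null, interruptions: state.interruptions + 1 };
    case "constraint_change":
      // Change one constraint and keep the rest of the task intact.
      return {
        ...state,
        constraints: { ...state.constraints, [event.key]: event.value },
        pendingPlan: null,
      };
  }
}

const start: TaskState = {
  constraints: { date: "Tuesday", time: "15:00", location: "Main St clinic" },
  pendingPlan: "confirm Tuesday at 15:00",
  interruptions: 0,
};

const afterInterrupt = applyEvent(start, { kind: "interruption" });
const afterChange = applyEvent(afterInterrupt, {
  kind: "constraint_change",
  key: "time",
  value: "16:30",
});

console.log(afterChange.constraints);
```

If the date and location survive the time change and the old plan is gone, the ugly test call has something deterministic to assert against.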

The Migration Story Is Quietly Important

Realtime is moving from experiment to platform

The other signal is less flashy but probably more important for teams already building on realtime audio. Microsoft has a Realtime API preview-to-GA migration guide that talks about endpoint changes, event names, session configuration, and SDK compatibility. That is not the kind of thing normal users care about. Developers should.

When an API gets a migration guide, stable docs, pricing pages, model pages, and multiple connection methods, it is leaving the toy zone. The question shifts from “can I make a talking bot?” to “can I operate this without waking up every time a dependency changes?”

That is a healthier question.

I would check WebRTC first for browser and mobile experiences, WebSocket when the server needs more orchestration, and SIP only when telephony is actually the product. Picking transport casually is how teams end up debugging latency from the wrong layer for a month.
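Written down as code, my transport rule of thumb is tiny. This is only a sketch of the preference order in the paragraph above; `ClientKind` and `chooseTransport` are hypothetical names, and a real decision would also weigh network conditions and where the tool calls run.

```typescript
type Transport = "webrtc" | "websocket" | "sip";

// Hypothetical classification of where the audio originates.
type ClientKind = "browser" | "mobile" | "server" | "phone_line";

function chooseTransport(client: ClientKind): Transport {
  switch (client) {
    case "browser":
    case "mobile":
      return "webrtc"; // end-user devices first
    case "server":
      return "websocket"; // server-side orchestration
    case "phone_line":
      return "sip"; // only when telephony is the product
  }
}

console.log(chooseTransport("browser"));
console.log(chooseTransport("server"));
console.log(chooseTransport("phone_line"));
```

Making the choice explicit in one place at least means nobody picks a transport by accident.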

API only is the right starting point

The Reddit thread that picked up the release had the obvious question: is this live in the ChatGPT app, or is it API only? The answer in the thread was API only, at least at the time of that discussion.

That actually feels right to me. Realtime 2 should be pressure-tested by developers building weird, specific workflows before it becomes a general consumer expectation. A product team building a support agent can define allowed tools, recovery language, escalation behavior, cost ceilings, and trace logging. A consumer voice assistant gets judged like magic. That is a much harsher environment.

Also, developers are better at finding the annoying edge cases. We will make it book the wrong calendar, translate a half-sentence, interrupt it with a fake address, run it through a flaky cafe connection, and then complain loudly when the transcript state gets weird. Good. That is the work.

What I Am Actually Changing On My Side

I would prototype voice agents with stricter gates now

If I were adding GPT Realtime 2 to a product this week, I would keep the prototype small and mean.

No giant “AI receptionist” scope. No full autonomous support agent on day one. I would pick one bounded flow where voice is clearly better than typing: rescheduling an appointment, collecting intake details, live translating a product walkthrough, or turning a messy field note into structured data while the person is still on-site.

Then I would build the boring rails first:

```typescript
const voiceAgentPolicy = {
  maxSessionMinutes: 8, // hard cap on session length
  defaultReasoningEffort: "low", // cheap and fast unless a turn needs more
  highReasoningOnlyFor: ["policy_check", "multi_step_tool_plan"],
  requireHumanHandoffFor: ["billing_dispute", "medical_advice", "legal_claim"],
  logEvents: ["interruption", "tool_call", "handoff", "fallback"],
};
```

That is not glamorous, but it is the difference between “the demo talks” and “the product can survive Friday afternoon traffic.”
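A policy object only matters if something enforces it on every turn. Here is a minimal sketch of that gate, assuming each turn has already been classified into an intent string. The `decideTurn` function, the `TurnDecision` shape, and the `"low" | "high"` effort values are my own illustrative choices, and the policy values are repeated so the block stands alone.

```typescript
type ReasoningEffort = "low" | "high";

interface VoiceAgentPolicy {
  maxSessionMinutes: number;
  defaultReasoningEffort: ReasoningEffort;
  highReasoningOnlyFor: string[];
  requireHumanHandoffFor: string[];
  logEvents: string[];
}

// Same values as the policy above, repeated so this sketch is self-contained.
const policy: VoiceAgentPolicy = {
  maxSessionMinutes: 8,
  defaultReasoningEffort: "low",
  highReasoningOnlyFor: ["policy_check", "multi_step_tool_plan"],
  requireHumanHandoffFor: ["billing_dispute", "medical_advice", "legal_claim"],
  logEvents: ["interruption", "tool_call", "handoff", "fallback"],
};

interface TurnDecision {
  action: "handoff" | "end_session" | "respond";
  reasoningEffort?: ReasoningEffort; // only set when the agent responds
}

// Hypothetical per-turn gate: handoff rules win, then the session cap,
// then the reasoning-effort choice.
function decideTurn(p: VoiceAgentPolicy, intent: string, elapsedMinutes: number): TurnDecision {
  if (p.requireHumanHandoffFor.indexOf(intent) !== -1) {
    return { action: "handoff" };
  }
  if (elapsedMinutes >= p.maxSessionMinutes) {
    return { action: "end_session" };
  }
  const reasoningEffort: ReasoningEffort =
    p.highReasoningOnlyFor.indexOf(intent) !== -1 ? "high" : p.defaultReasoningEffort;
  return { action: "respond", reasoningEffort };
}

console.log(decideTurn(policy, "reschedule", 2));
console.log(decideTurn(policy, "policy_check", 2));
console.log(decideTurn(policy, "billing_dispute", 2));
console.log(decideTurn(policy, "reschedule", 8));
```

Checking handoff before the session cap is a deliberate choice: a billing dispute at minute nine should still reach a human instead of just getting cut off.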

My gut read: GPT Realtime 2 does not make every app need a voice interface. Most apps still do not. But it does make the serious voice-agent category harder to dismiss. The model is better, the surrounding API surface is more complete, and the cost model is explicit enough that teams can design around it instead of pretending it will be cheap by default.

So yes, I am paying attention. Not because the launch headline is loud. Because the boring pieces finally look like they are lining up.