Claude API proxies are suddenly the cheap shortcut everyone has an opinion about, and the security angle is uglier than the discount.

The basic pitch sounds harmless. Someone offers Claude access at a massive markdown. You change a base URL, keep your existing SDK calls, and your coding agent keeps running. No corporate procurement fight. No region restriction headache. No surprise invoice from a weekend refactor session.

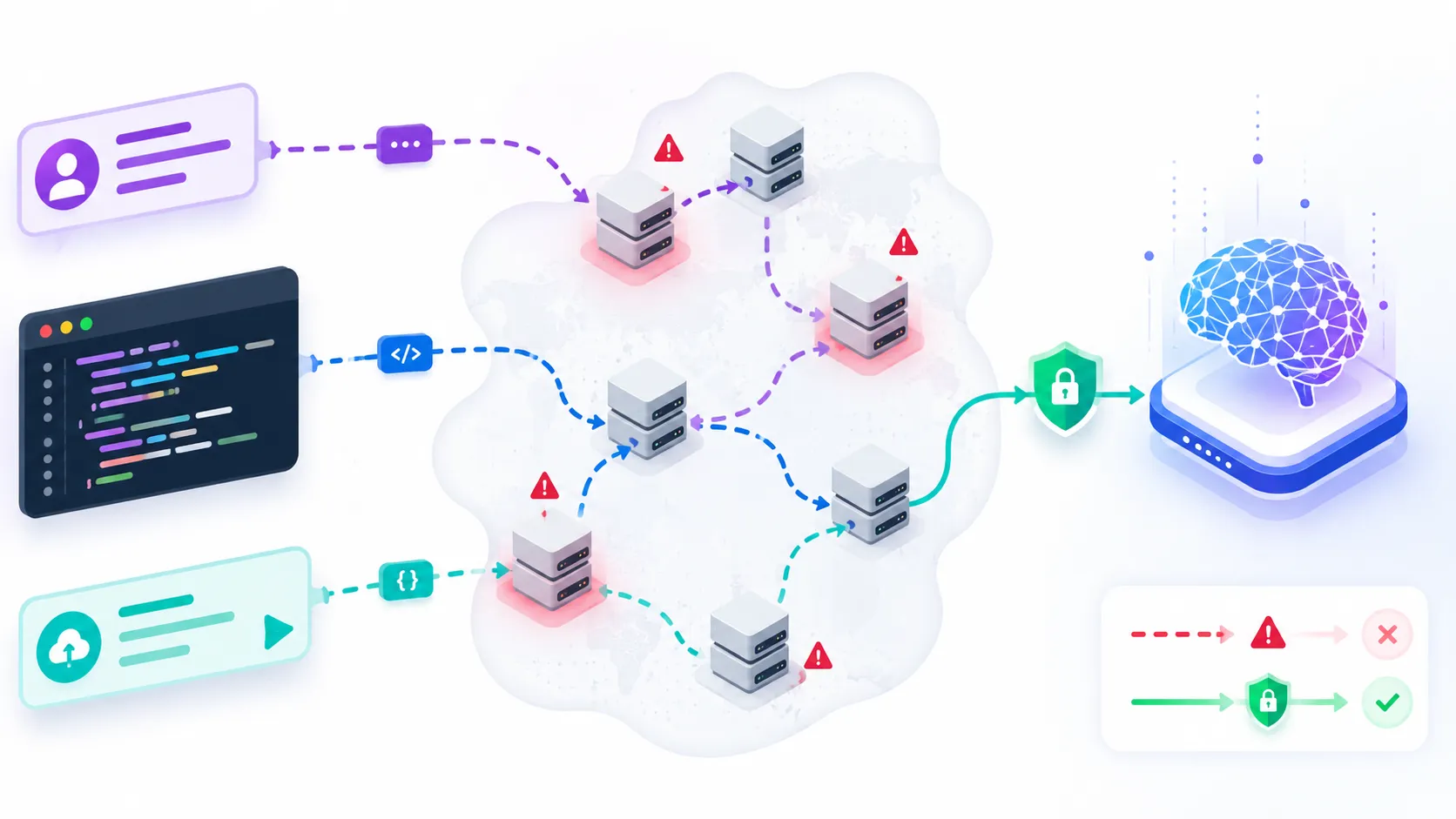

That is the sales copy. The actual system is an unknown middleman sitting between your repository context and the model provider.

The story caught fire after Zilan Qian’s ChinaTalk investigation described a grey market of Chinese “transfer stations” selling access to Claude at a fraction of official pricing. Tom’s Hardware picked it up with a developer-security angle: the cheap access may be funded by account farming, model substitution, stolen credentials, and prompt logging.

I get why developers are tempted. AI coding tools burn tokens like crazy. But if a proxy is cheap because your prompts and outputs are part of the margin, that is not a discount. That is a data leak with a nice invoice.

Claude API Proxies Are Not Just Cheaper Endpoints

A base URL change can move your trust boundary

The weird thing about this risk is how small the technical change looks.

export ANTHROPIC_API_KEY="proxy-issued-token"

export ANTHROPIC_BASE_URL="https://some-cheap-proxy.example"

That feels like configuration. In practice, it moves your trust boundary.

Your codebase summary, issue text, stack traces, customer-shaped examples, internal service names, failed tests, shell output, and the agent’s correction loop now pass through an operator you probably cannot audit. If the tool is running in a repo, it may also see filenames, dependency names, API shapes, migration plans, and enough product logic to be useful to a competitor.

That is already bad for normal chat. It is much worse for coding agents, because agents send richer context than humans realize. A human might paste one function. An agent might send the failing test, relevant files, prior attempts, command output, tool call traces, and a reasoning-heavy transcript that basically explains how the system works.

I wrote a basic Claude and Gemini API key setup guide recently, and the boring advice there still matters: treat API keys like secrets. This proxy story adds another rule. Treat the API endpoint with the same care as the key, because the endpoint decides who receives the secret material.
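If I wanted a wrapper script to enforce that rule, a minimal sketch might look like this. It assumes the override arrives through ANTHROPIC_BASE_URL (the variable shown earlier), that an unset variable means the client uses its default endpoint, and that the allowlist holds only hosts your team has vetted. The names TRUSTED_AI_HOSTS, is_trusted_endpoint, and require_trusted_endpoint are made up for illustration and are not part of any SDK.

```python
import os
from urllib.parse import urlsplit

# Hypothetical allowlist: replace with the hosts your team has actually vetted.
TRUSTED_AI_HOSTS = frozenset({"api.anthropic.com"})


def is_trusted_endpoint(base_url, trusted_hosts=TRUSTED_AI_HOSTS):
    """Return True only for HTTPS URLs whose exact hostname is allowlisted."""
    if not base_url:
        # Assumption: no override means the client uses its built-in default.
        return True
    try:
        parts = urlsplit(base_url)
        host = (parts.hostname or "").lower()
    except ValueError:
        # Malformed URLs are rejected rather than guessed at.
        return False
    return parts.scheme == "https" and host in trusted_hosts


def require_trusted_endpoint(env=os.environ):
    """Raise before any prompt is sent if the base URL override is not vetted."""
    base_url = env.get("ANTHROPIC_BASE_URL", "")
    if not is_trusted_endpoint(base_url):
        raise RuntimeError(f"Untrusted AI endpoint: {base_url!r}")
```

The exact hostname match is the point. A substring check would accept https://api.anthropic.com.evil.example, and a check that ignores the scheme would accept plain HTTP.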

The proxy can quietly swap the model

The model substitution part is what annoys me the most.

If I call an official endpoint and ask for a specific model, I can still complain about price, latency, rate limits, or product direction. But at least the contract is legible. With a grey-market proxy, the operator can route your expensive request to a cheaper model and relabel the response. You may think you are testing Claude Opus. You may actually be testing something else.

That breaks engineering judgment in a very sneaky way. You tune prompts against a fake target. You compare tools using bad data. You blame the wrong model for weak output. Or worse, you ship an internal workflow because it looked good during proxy-backed testing, then it behaves differently when moved to the official provider.

The ChinaTalk piece points to proxy audits where advertised models underperformed official APIs on benchmark-style checks. I do not even need the benchmark to be perfect to care. The risk is enough by itself: if the provider is opaque, you cannot prove what ran.

The Logs Are The Part Developers Should Fear

Coding prompts are training data with better labels

The scariest sentence in this whole story is not “90 percent off.” It is the idea that the logs may be the product.

Every useful coding-agent session is a compact training dataset. It contains a real task, real project constraints, failed attempts, human-approved corrections, tests that define success, and final code that probably works. That is much richer than random scraped code. It has intent and feedback baked in.

So if a proxy operator stores full prompts and responses, those logs are valuable. They can be resold, mined, filtered, turned into fine-tuning examples, used to clone product behavior, or just searched later when someone wants secrets.

This is why I am more worried about developer prompts than generic chatbot prompts. A normal chat can still be sensitive, obviously. But a coding-agent prompt often contains the moving pieces of a business. Even when it does not include passwords, it may include architectural facts that should not leave the company.

Here is the boring checklist I would run before letting any AI endpoint touch a private repo:

| Question | Why It Matters |
|---|---|
| Who operates the endpoint? | Anonymous infrastructure means no accountability. |
| Can the provider prove model identity? | Otherwise evaluation results are suspect. |
| Are prompts retained or resold? | Coding sessions are high-value training data. |
| Can we delete logs? | No deletion path usually means no governance. |
| Does legal approve the data route? | Vendor risk is not optional for customer code. |
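One way to keep that checklist from staying a wiki page is to record the answers per endpoint and refuse private-repo use unless every answer is acceptable. This is only a sketch under my own assumptions: the EndpointReview class, its field names, and the all-or-nothing rule are illustrative, not a standard.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class EndpointReview:
    """Answers to the checklist above. Field names are illustrative."""
    operator_identified: bool
    model_identity_verifiable: bool
    retention_policy_acceptable: bool
    logs_deletable: bool
    legal_approved: bool


def failed_checks(review):
    """List the checklist items this endpoint does not satisfy, in table order."""
    return [f.name for f in fields(review) if not getattr(review, f.name)]


def allowed_on_private_repo(review):
    """Assumed policy: every item must pass before private code is sent."""
    return not failed_checks(review)
```

A review with an unknown model identity and no deletion path would come back with those two names in failed_checks, which is a more useful rejection message than “security said no.”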

Official warnings now match the grey-market shape

This is not only a consumer-scam story. It fits a larger model-extraction fight that has been building all year.

Anthropic’s distillation attack writeup said some campaigns used proxy networks with tens of thousands of fraudulent accounts, including one network managing more than 20,000 accounts at once. The company described large volumes of repeated, targeted prompts used to extract model capabilities.

The White House then escalated the issue into policy language. TechRepublic’s coverage framed it as industrial-scale model extraction using proxy accounts and jailbreak techniques. Defense One connected the same warning to Anthropic’s earlier claim of 16 million exchanges through about 24,000 fraudulent accounts.

I do not love every national-security frame around AI. It can get theatrical fast. But for developers, the practical lesson is simple: proxy infrastructure exists because there is money in breaking the direct relationship between model user and model provider.

Once that relationship is broken, you lose traceability.

What I Would Change In A Real Dev Team

Ban unknown AI base URLs in private repos

I would start with a blunt rule: no unknown AI proxy endpoints for private code.

That sounds strict until you compare it to the existing rules most teams already accept. You probably would not let a developer route production database traffic through an anonymous bargain VPN. You probably would not let CI upload build logs to a mystery pastebin because it was cheaper than S3. AI prompts deserve the same treatment.

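The easy control is to search configs for suspicious base URL overrides. ripgrep skips hidden and gitignored files by default, and that is usually where .env files live, so the first command turns both filters off. The second command searches case-insensitively and word-bounds the bare “ai” term so it does not match every “main” and “email” in the file. Shell histories live outside the repo, so check those separately.

rg --hidden --no-ignore -g '!.git' "ANTHROPIC_BASE_URL|OPENAI_BASE_URL|baseURL|base_url|apiBase|api_base" .

rg -i "claude|anthropic|openai|gemini|llm|\bai\b" .env* docker-compose.yml package.json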

That is not a full audit, but it catches the sloppy stuff. I would also check IDE settings, agent config files, CI variables, and any wrapper scripts used by developers. The dangerous setup is often not in application code. It is in the developer tooling around the app.
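A text search tells you an override exists, not whether it points somewhere acceptable. A rough sketch of the next step, assuming the same key names as the search above and a hypothetical allowlist of vetted hosts, is to extract the URL next to each key and flag any host that is not on the list. The function name and the regular expression are mine, and the pattern will miss overrides built from string concatenation or set through a UI.

```python
import re
from urllib.parse import urlsplit

# Hypothetical allowlist: replace with the hosts your team has actually vetted.
TRUSTED_AI_HOSTS = frozenset({"api.anthropic.com"})

# A known key name, then "=" or ":", then an http(s) URL (optionally quoted).
OVERRIDE = re.compile(
    r"(ANTHROPIC_BASE_URL|OPENAI_BASE_URL|baseURL|base_url|apiBase|api_base)"
    r"""["']?\s*[:=]\s*["']?(https?://[^\s"',]+)"""
)


def _host(url):
    """Return the lowercased hostname, or an empty string if the URL is malformed."""
    try:
        return (urlsplit(url).hostname or "").lower()
    except ValueError:
        return ""


def find_untrusted_overrides(text, trusted_hosts=TRUSTED_AI_HOSTS):
    """Return (line_number, key, host) for each override pointing off the allowlist."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for match in OVERRIDE.finditer(line):
            key, host = match.group(1), _host(match.group(2))
            if host not in trusted_hosts:
                findings.append((lineno, key, host))
    return findings
```

Run it over the contents of each config file, IDE settings file, and wrapper script you care about. A local gateway such as http://localhost:8080 will be flagged too, which I think is right: someone should be able to say where that gateway forwards traffic.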

Separate cheap experiments from trusted work

There is still a place for experiments. If someone wants to test a cheap proxy on throwaway prompts, synthetic code, or public toy repos, fine. Just label it as untrusted.

What I would not do is let that endpoint anywhere near customer data, private repositories, unreleased product plans, internal incident notes, auth flows, or proprietary benchmarks. The proxy operator does not need a secret key to hurt you if you voluntarily send the blueprint.

I would also stop using proxy-backed sessions as serious model evaluations. If the endpoint cannot prove model identity and logging policy, its output is not evaluation data. It is a vibes sample.

The Discount Is The Signal

If the price makes no sense, inspect the margin

The part I keep coming back to is incentives.

Frontier models are expensive to run. Official providers argue about pricing all the time, but the basic shape is obvious: high-end inference costs real money. If a middleman can sell the same thing at a tiny fraction of the price, there has to be an explanation.

Maybe they are arbitraging subscriptions. Maybe they are farming credits. Maybe they are cycling compromised accounts. Maybe they are swapping models. Maybe they are monetizing logs. Maybe several of those are true at once.

None of those explanations make me want to send a private repo through the service.

This is where the clickbait version of the story actually undersells the engineering problem. “Cheap Claude steals your prompts” is scary, but the deeper issue is that AI agent infrastructure is becoming part of the software supply chain. It can read your code, write code, run commands, summarize incidents, and shape decisions. Treating that infrastructure as a random interchangeable endpoint is asking for trouble.

My working rule is boring and useful

If an AI endpoint touches private engineering context, it needs a real vendor relationship, a logging policy, a deletion story, an abuse contact, and a model-identity guarantee.

That will feel slower than pasting a cheaper base URL. It is also the difference between using an engineering tool and feeding your roadmap into an unknown market.

Cheap Claude API proxies may keep working. Some will probably be technically impressive. Some will be run by people who think they are just helping developers route around access blocks. I am not interested in moralizing every user who tried one.

But for private code, the answer is easy. The discount is not the feature. The discount is the warning label.